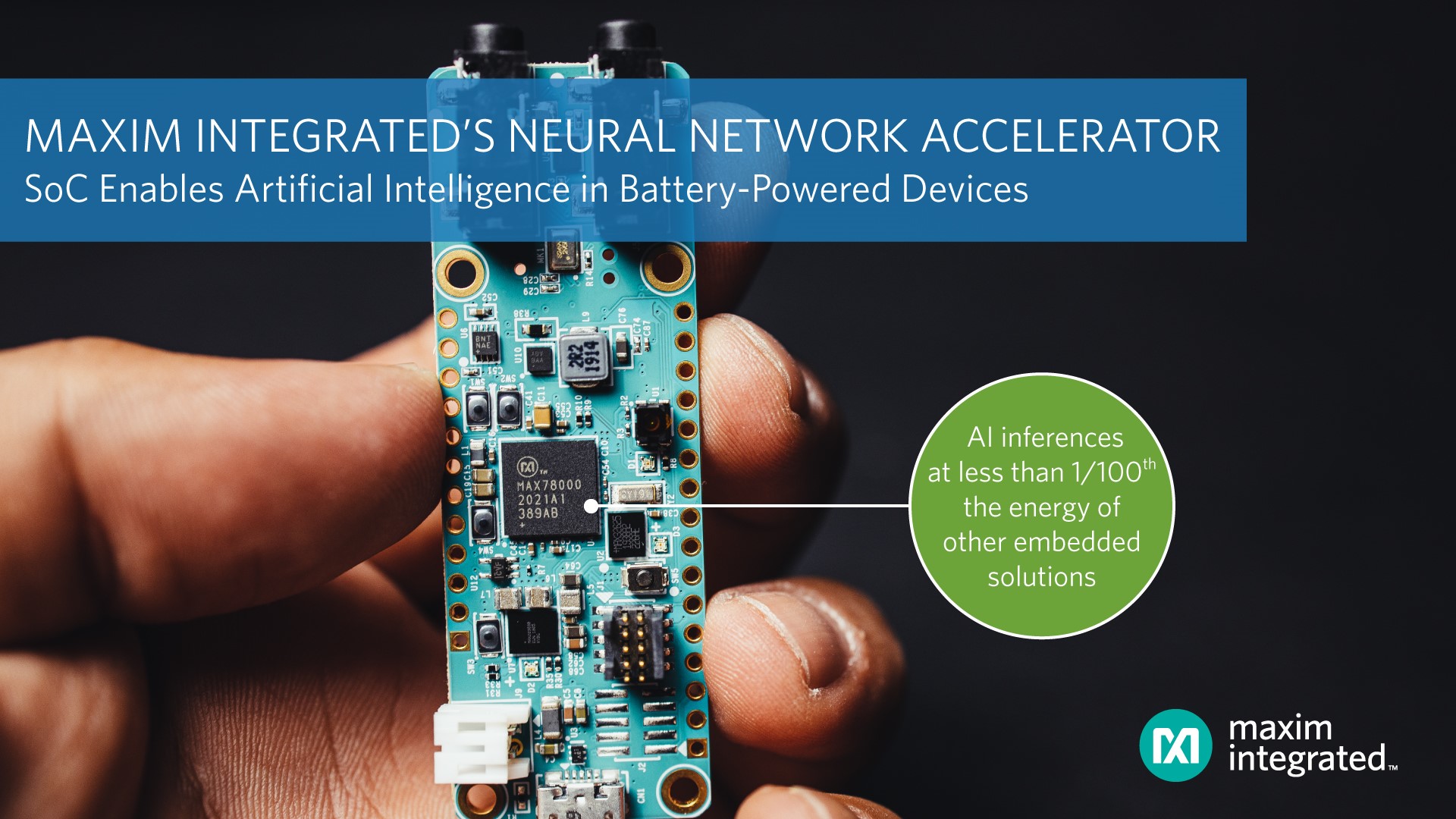

Maxim Integrated’s MAX78000, a low-power MCU with a neural network accelerator, has earned a LEAP Award in Embedded Computing. The device executes AI inferences at less than 1/100th the energy of other embedded solutions, dramatically improving run-time for battery-powered AI applications.

More than 100 entries were received for the annual competition, which celebrates the most innovative and forward-thinking products serving the design engineering space. This year’s winners were chosen by an independent judging panel of 12 engineering and academic professionals.

The MAX78000’s power improvements come with no compromise in latency or cost: the MAX78000 executes inferences 100x faster than software solutions running on low-power microcontrollers, at a fraction of the cost of FPGAs or GPUs.

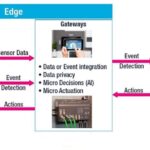

By integrating a dedicated neural network accelerator with a pair of microcontroller cores, the MAX78000 overcomes the poor latency and energy performance typical of other low-power MCUs used to implement simple neural networks. It enables machines to see and hear complex patterns with local, low-power AI processing that executes in real time. Applications such as machine vision, audio and facial recognition can be made more efficient since the MAX78000 can execute inferences at less than 1/100th of the energy required by a microcontroller. At the heart of the MAX78000 is specialized hardware designed to minimize the energy consumption and latency of convolutional neural networks (CNNs). This hardware runs with minimal intervention from any microcontroller core, making operation extremely streamlined. Energy and time are used only for the mathematical operations that implement a CNN. To get data from the external world into the CNN engine efficiently, customers can use one of the two integrated microcontroller cores: the ultra-low-power Arm Cortex-M4 core, or the even lower-power RISC-V core.

“We’ve cut the power cord for AI at the edge,” said Kris Ardis, executive director for the Micros, Security and Software Business Unit at Maxim Integrated. “Battery-powered IoT devices can now do much more than just simple keyword spotting. We’ve changed the game in the typical power, latency and cost tradeoff, and we’re excited to see a new universe of applications that this innovative technology enables.”

The judges commented: “Very impressed by executing AI inferences at less than 1/100th the energy of other embedded solutions, improving run-time for battery-powered AI applications.” Congratulations!
