At the International Consumer Electronics Show (CES) 2019, Eyeris Technologies, Inc. announced the commercial availability of its expanded EyerisNet product portfolio. The portfolio includes the industry’s first comprehensive In-vehicle Scene Understanding (ISU) AI and Interior Image Segmentation software, designed to achieve the most accurate understanding of the automotive interior cabin space. During CES 2019, Eyeris will hold invitation-only demonstrations of its technology and products in its private suite and in its Tesla Model S demonstration vehicle at the Westgate Las Vegas.

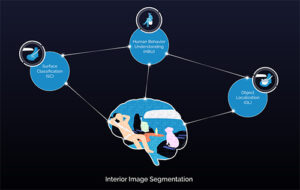

Eyeris’ vision AI algorithms take input from multiple automotive-grade 2D RGB-IR image sensors and deliver real-time analytics at the edge, running on the company’s proprietary AI chip. The expanded EyerisNet portfolio includes:

- Interior Image Segmentation provides a ‘pixel map’ where every pixel in the vehicle interior scene is associated with a class label such as human, object or surface, along with their corresponding regions and contours for greater interior scene understanding accuracy.

- Human Behavior Understanding (HBU) AI provides body tracking, action and activity recognition, face analytics and emotion recognition for all occupants inside the vehicle.

- Object Localization provides detection, classification, size and position of objects.

- Surface Classification provides the identification and position of all in-cabin surfaces (such as footwells, door panels and the center console) relative to occupants and objects.
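To make the ‘pixel map’ idea concrete, here is a minimal Python sketch, assuming the segmentation output arrives as a 2D grid of integer class IDs. The class IDs, the `CLASS_NAMES` table, `Region` and `summarize_pixel_map` are hypothetical illustrations, not part of any published EyerisNet API. The sketch groups pixels by label and reports each class’s area and bounding box, a simplified stand-in for the per-class regions described above; it treats all pixels of a class as one region and does not extract contours or separate individual instances.

```python
from dataclasses import dataclass

# Hypothetical class IDs for illustration only; not Eyeris' actual label set.
CLASS_NAMES = {0: "surface", 1: "human", 2: "object"}


@dataclass
class Region:
    label: str
    pixel_count: int
    # Bounding box as (row_min, col_min, row_max, col_max), inclusive.
    bbox: tuple


def class_name(class_id: int) -> str:
    """Map a class ID to a readable name, falling back to a generic one."""
    return CLASS_NAMES.get(class_id, f"class_{class_id}")


def summarize_pixel_map(pixel_map):
    """Group pixels by class label; return each class's area and bounding box."""
    # class_id -> [count, row_min, col_min, row_max, col_max]
    stats = {}
    for r, row in enumerate(pixel_map):
        for c, class_id in enumerate(row):
            s = stats.setdefault(class_id, [0, r, c, r, c])
            s[0] += 1
            s[1] = min(s[1], r)
            s[2] = min(s[2], c)
            s[3] = max(s[3], r)
            s[4] = max(s[4], c)
    # Classes appear in the order they are first encountered (row-major scan).
    return {
        class_name(cid): Region(class_name(cid), s[0], tuple(s[1:]))
        for cid, s in stats.items()
    }


# A tiny 4x5 "cabin" scene: surface background, one occupant, one object.
pixel_map = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 1, 0, 2],
    [0, 1, 1, 0, 2],
]

for region in summarize_pixel_map(pixel_map).values():
    print(region)
# Prints:
# Region(label='surface', pixel_count=12, bbox=(0, 0, 3, 4))
# Region(label='human', pixel_count=6, bbox=(1, 1, 3, 2))
# Region(label='object', pixel_count=2, bbox=(2, 4, 3, 4))
```

A production system would typically go further, for example by labeling connected components so that two occupants of the same class yield two regions, and by tracing region boundaries to obtain contours.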

At CES 2019, Eyeris is also introducing its proprietary AI chip, a compact, scalable hardware solution specifically designed to run inference for the entire EyerisNet suite of deep neural networks on input from multiple 2D cameras, enabling real-time edge computing. The automotive-grade ASIC is AEC-Q100 qualified and power-efficient, consuming less than 7 watts.

As cameras arrive inside the vehicle cabin, the market is quickly moving toward multi-camera systems that go beyond driver monitoring to overall in-vehicle scene understanding. “Eyeris offers the only technology in the world capable of providing analytics on everything that occurs inside the vehicle with our vision AI software portfolio and AI chip,” said Modar Alaoui, founder and CEO of Eyeris. “With our analytics, passive safety systems can be transformed into active, intelligent safety systems, such as smarter deployment of airbags based on an occupant’s size, height and seating position and orientation. We refer to this as real-time intuitive safety and comfort controls. All of this runs on the edge while protecting occupants’ privacy.”
