Autonomous vehicles must operate in a world of uncertainties. Validation platforms are now capable of determining whether behavior is correct given complex contextual attributes.

David Fritz, Mentor, A Siemens Business

There is an interesting dashcam video circulating in the autonomous vehicle (AV) development community of a Tesla-paid test driver coming up on what the driver calls the curve of death. He has gone through this particular curve numerous times with the autopilot feature turned on. In the video, the car starts out driving too close to the left-hand side of the curve. The Tesla is going so slowly that the driver worries aloud about the semitruck behind him. He’s concerned that the truck might try to pass him on the right even though the vehicles are in what is supposed to be a single lane. As the Tesla goes through the curve, it drifts way off into what NASCAR calls the marbles, small bits of debris that come off tires and vehicles and accumulate near the outside wall of a track. Finally, the Tesla gets around the curve and the driver disengages the autopilot.

The point of the video is that the actual state of AV technology is far behind what you might think from reading accounts in the press. Clearly, we have a long way to go before AV technology is ready for prime time. One reason is the sheer complexity of the task. It involves sensing technology that includes cameras, lidar, and radar, and a complicated decision-making process that goes into managing vehicle steering, braking, and engine control.

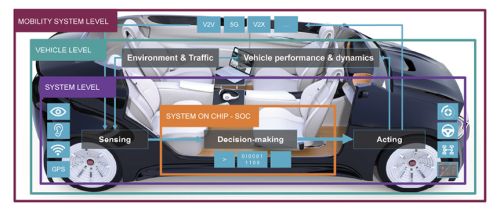

The system level refers to the ICs and software that implement the decision making, the sensors, and the actuation mechanics for steering, engine control, and so forth. Today, decision making at the system level generally employs special-purpose SoCs that combine multiple CPU cores with Graphics Processing Units (GPUs), Digital Signal Processors (DSPs), Field Programmable Gate Arrays (FPGAs), Video Processing Units (VPUs), Image Signal Processors (ISPs), Neural Processing Units (NPUs), and other kinds of computational accelerators.

Many automotive equipment and vehicle manufacturers are developing, or contemplating developing, custom SoCs for decision making rather than relying on commercially available ICs. The reasons include optimization for their platform, differentiation from other vendors, and reduced dependence on traditional semiconductor vendors.

A case in point is Tesla, which says its own neural processing chipset performs 36.8 Tera Operations per Second (TOPS) while consuming 72 W. The overall performance of the Tesla custom chipset falls well short of the Nvidia Drive AGX Pegasus platform, a commercial device. But analysts figure Tesla’s approach saves 20% over the cost of the Nvidia platform.
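The two figures quoted for the Tesla chipset can be combined into a single efficiency number. The short Python sketch below only does that arithmetic; the function name is made up for illustration, and no comparable figure is computed for the Nvidia platform because the text gives none.

```python
# Figures quoted in the text for Tesla's neural processing chipset.
TESLA_TOPS = 36.8    # tera operations per second
TESLA_WATTS = 72.0   # power draw in watts


def tops_per_watt(tops: float, watts: float) -> float:
    """Return compute efficiency in TOPS per watt (hypothetical helper)."""
    if watts <= 0:
        raise ValueError("power must be positive")
    return tops / watts


efficiency = tops_per_watt(TESLA_TOPS, TESLA_WATTS)
print(f"{efficiency:.2f} TOPS/W")  # prints "0.51 TOPS/W"
```

In other words, the quoted figures work out to roughly half a tera-operation per second for each watt consumed.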

Sitting one level above the system level is the vehicle level. It covers inputs about the environment in which the vehicle finds itself, such as nearby traffic. It also factors in knowledge about vehicle performance and dynamics, which the system level uses for decision making as well.

Sitting above the vehicle level is the mobility level. It encompasses communication technologies that include V2V, 5G, V2X, and so forth, that are used to connect individual vehicles to infrastructure, other vehicles, and data sources.

The mobility level, vehicle level, and system level functions are all highly interdependent. This interdependency means that developers can’t use conventional development techniques to devise an AV. In the old days, developers might first enumerate requirements, decompose the requirements into basic functions, build a few MATLAB models, generate some C code, slap the code on a microcontroller, and call the project done. But complicated interdependencies prevent AVs from fitting nicely into this mold.

What’s changed in AV technology is not the requirements so much as how we implement or fulfill them. That’s where a lot of the emphasis on SoCs in decision making is starting to come into play.

It’s helpful to talk about specific examples to illustrate the interactions involved in decision making. Imagine your vehicle driving along at 130 km/h when the AV system sees what it thinks is a baby in the road. Any human would know that the object is probably not a baby, and they would probably want a closer look before making a decision.

But the AV system “knows” the best thing to do is to avoid hitting a baby. That’s its number-one priority. There is a car in the blind spot that the AV knows about, but because it cannot stop in time, it decides that statistically the better decision is to change lanes. Hopefully, the car next to it will adjust and the AV avoids hitting the baby.

The scenario we’ve just outlined actually happened. Unfortunately, it did not have a happy ending. The car didn’t simply move over to miss the baby. It actually swerved, lost control, and hit a tree, causing injuries. And it turned out the baby in the road was actually a cat.

Several improvements to AV technology will be needed to head off bad outcomes like this one. One such improvement is to model vehicle dynamics at a higher level of precision. Consider another scenario where a car comes around the corner and its tires hit a slick spot, lose some traction, and cause an accident. This accident is avoidable if the modeling process is accurate enough to anticipate potential problems like a loss of traction.

More specifically, if you only look at an abstract virtual prototype of the car as it goes through a corner, it may seem like everything is good. But a higher-fidelity model of the vehicle dynamics gives a more accurate picture of how the vehicle will behave. Models of vehicle dynamics with a high level of accuracy lead to a close calibration of the digital twin with the physical platform. A typical way of calibrating the two is to run a set of scenarios through the digital twin, then mock up the same scenarios on a track. Developers can have a high level of confidence in a digital twin that behaves identically to the real vehicle under those conditions.
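The calibration loop described above, running the same scenarios through the twin and on a track and then comparing, might be sketched as follows. This is only an illustration: the scenario names, the lateral-offset samples, the 0.05 m tolerance, and the choice of root-mean-square error as the comparison metric are all assumptions, not anything the article or a specific tool prescribes.

```python
import math


def rmse(simulated, measured):
    """Root-mean-square error between two equally sampled traces."""
    if len(simulated) != len(measured) or not simulated:
        raise ValueError("traces must be non-empty and the same length")
    squared = [(s - m) ** 2 for s, m in zip(simulated, measured)]
    return math.sqrt(sum(squared) / len(squared))


def uncalibrated_scenarios(runs, tolerance_m):
    """Return names of scenarios where twin and track disagree too much."""
    return [name for name, (sim, track) in runs.items()
            if rmse(sim, track) > tolerance_m]


# Made-up lateral offsets from lane center, in meters, sampled at the
# same points along a curve: (digital twin run, physical track run).
runs = {
    "curve_dry": ([0.10, 0.20, 0.30], [0.12, 0.18, 0.31]),
    "curve_wet": ([0.10, 0.40, 0.90], [0.10, 0.55, 1.40]),
}

failing = uncalibrated_scenarios(runs, tolerance_m=0.05)
print(failing)  # prints "['curve_wet']"
```

With these invented numbers, the twin tracks the dry run closely but diverges on the wet one, which is the kind of gap that would send developers back to refine the vehicle dynamics model.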

A high-fidelity digital twin allows developers to run what are known as corner-case scenarios – scenarios involving a problem or situation that arises only outside of normal operating parameters, and specifically one that happens when multiple environmental variables or conditions are simultaneously at extreme levels, though each parameter is within its specified range.
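One simple way to enumerate corner cases in this sense is to take every combination of the minimum and maximum of each parameter. The Python sketch below does that for three parameters; the parameter names and ranges are hypothetical, chosen only to make the idea concrete.

```python
from itertools import product

# Hypothetical operating ranges: (minimum, maximum) for each parameter.
RANGES = {
    "speed_kmh": (0.0, 130.0),
    "road_friction": (0.2, 1.0),
    "visibility_m": (20.0, 2000.0),
}


def corner_cases(ranges):
    """Yield every combination of per-parameter extremes.

    Each value stays inside its specified range, but all parameters
    sit at an extreme at the same time.
    """
    names = list(ranges)
    for combo in product(*(ranges[name] for name in names)):
        yield dict(zip(names, combo))


cases = list(corner_cases(RANGES))
print(len(cases))  # prints "8" (2 extremes for each of 3 parameters)
```

The count doubles with every added parameter, which is one reason exhaustive corner-case testing is impractical on a physical track and better suited to simulation.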

Classic examples of corner cases include the experience of Waymo in Australia when its AVs didn’t behave properly because they didn’t know what a kangaroo was. Knowledge about kangaroos is important for cars in Sydney, less so for vehicles in San Francisco. On the other hand, it is probably more important for AVs traversing bridges in San Francisco to recognize when an earthquake is underway.

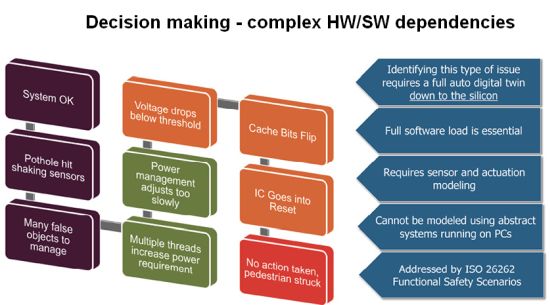

High-fidelity digital twins can be used to explore AV performance in corner cases, but it is useful to understand just what factors separate a “high fidelity” digital twin from a more generic version. Perhaps the clearest way of explaining the difference between the two is through another example. Imagine an AV driving down the road that hits a sizeable pothole. The pothole shakes the

A high-fidelity digital twin able to model this scenario can only do so by simulating events all the way down to the silicon level, literally running detailed simulations of chip operations. In contrast, it makes little sense to simulate the hitting of a pothole on PCs in a server farm. The average AV will likely generate between 2 TB and 4 TB of data an hour via its sensors and processors. All this data must be processed and then acted upon in real time. A data center, in comparison, would require a minimum of a dual-socket x86 server with multiple GPU or FPGA accelerators to process this much data in real time. The server farm approach might be good for getting the software up and running but not for verifying the correct behavior of the system itself.
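The hourly data volumes quoted above translate into a sustained rate that the processing hardware must keep up with. The Python sketch below does the conversion, assuming decimal units (1 TB = 10^12 bytes, 1 MB = 10^6 bytes); the function name is illustrative.

```python
BYTES_PER_TB = 10**12      # assumption: decimal terabytes
BYTES_PER_MB = 10**6       # assumption: decimal megabytes
SECONDS_PER_HOUR = 3600


def sustained_rate_mb_per_s(tb_per_hour: float) -> float:
    """Convert a data volume in TB per hour to MB per second."""
    return tb_per_hour * BYTES_PER_TB / SECONDS_PER_HOUR / BYTES_PER_MB


low = sustained_rate_mb_per_s(2)
high = sustained_rate_mb_per_s(4)
print(f"{low:.0f} to {high:.0f} MB/s")  # prints "556 to 1111 MB/s"
```

So the quoted range corresponds to roughly 0.56 to 1.1 GB of sensor and processor data every second, continuously.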

It is also worth commenting on some of the other nuances that distinguish high-fidelity digital twins. For example, the simulation must run the full software load – you can’t stub out parts of the software for expedience. Ignoring one sensor for testing purposes changes the behavior of the whole system. And high-fidelity models must include the electromechanical actuation functions and the system dynamics of all the electromechanical components themselves. This level of detail simply can’t take place on remote PCs.

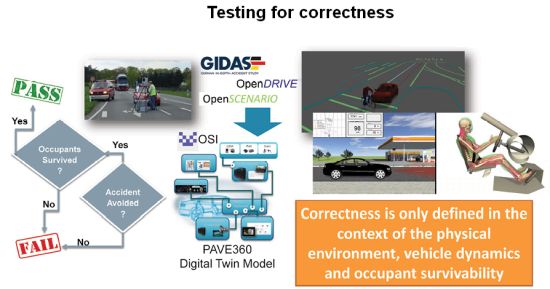

Once you have a high-fidelity digital twin, it becomes possible to use public databases, now being compiled by governmental bodies and insurance companies, as a source of verification scenarios. An example is GIDAS, the German In-Depth Accident Study. GIDAS is one of the most intensive research projects for road traffic accident studies worldwide. It is a cooperative project between the Federal Highway Research Institute and the German Association for Research in Automobile Technology. GIDAS records about 3,600 parameters for each accident, including information such as the condition of the wheels, the setting of the car in general, and the condition of the safety systems during the accident.

High-fidelity digital twins also open up the possibility of creating what is called scenario closure – coming up with scenarios that have yet to be imagined. It may be possible for intelligent machines to devise corner cases that humans are unlikely to think of and which could not be tested in a physical platform.

High-fidelity digital models can also model the passengers themselves, thus providing a simple criterion for passing one of the scenarios: Did you avoid the accident? If you did, did the occupants survive? This distinction is important because it is easy to make a move in an AV that would actually injure passengers. The easiest decision might not be the best decision in terms of passenger survivability.
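The two-part pass criterion can be written down directly. The Python sketch below is a minimal illustration; the `ScenarioResult` type and its field names are hypothetical and do not come from any particular validation tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScenarioResult:
    """Outcome of one simulated scenario (hypothetical structure)."""
    collision: bool            # did the vehicle hit anything?
    occupants_survived: bool   # did the modeled passengers survive?


def scenario_passed(result: ScenarioResult) -> bool:
    """Pass only if the accident was avoided AND the occupants survived."""
    return (not result.collision) and result.occupants_survived


# An evasive maneuver that avoids the obstacle but harms the passengers
# still counts as a failure.
violent_swerve = ScenarioResult(collision=False, occupants_survived=False)
clean_avoidance = ScenarioResult(collision=False, occupants_survived=True)

print(scenario_passed(violent_swerve))   # prints "False"
print(scenario_passed(clean_avoidance))  # prints "True"
```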

Modern tools

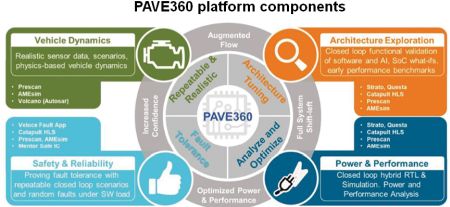

Fortunately, tools are being developed to aid in the production of digital twins with sufficient fidelity for AV testing. One example is a platform called PAVE360. The developer of PAVE360 is Siemens, which describes PAVE360 as a mixed-fidelity pre-silicon verification and validation environment. More specifically, PAVE360 is a simulation framework that allows semiconductor designers to develop and test high-performance virtual SoCs within a virtual car driving in a virtual world under controlled and repeatable virtual conditions.

PAVE360 can model high-performance SoCs capable of high-bandwidth sensor fusion, real-time AI neural network processing, and automotive safety-certified control solutions in conjunction with the software and the rest of the vehicle platform. While the main function of PAVE360 is to enable the design of SoCs, it also helps automotive systems designers simultaneously develop silicon, software, and electromechanical components using the FMI/FMU, TLM 2.0, and Simulink standards.

Another noteworthy aspect of PAVE360 is its extensive libraries which allow designers to develop or import models for silicon or electromechanical components ranging from sensors to ECUs. The models can be changed and tested during development of the individual components. Once the component designs are complete, high-fidelity models of each component are used for verification testing.

As you might suspect, PAVE360 is designed around the functional safety standards for automotive applications, including ISO 26262, the functional safety standard for electrical and electronic systems, and ISO 21448, the standard for safety of the intended functionality (SOTIF) of the vehicle. This helps designers ensure the entire platform meets required safety standards before committing any portion of the platform to production.

All in all, correct behavior in AVs can only be defined in the context of the physical vehicle itself and the environment within which it is being driven. AV subsystems are integrated so a bad decision by one subsystem affects all the others. One subsystem can’t overcome mistakes made by others if they’ve been tested separately. But a high-fidelity digital twin can use scenario databases or formal methodologies to show how the physical platform will behave in ways that are difficult to test on the road.

