Retrieval-augmented generation (RAG) and table-augmented generation (TAG) are both techniques that improve the accuracy and relevance of output from artificial intelligence (AI) by drawing on external data. Related options include retrieval-augmented fine-tuning (RAFT) and retrieval-centric generation (RCG).

Understanding when to use RAG, TAG, RAFT, and RCG can be crucial to successful and efficient AI implementations. All are focused on improving the performance of large language models (LLMs). LLMs generate responses based on training data that may be outdated or incomplete. RAG, TAG, RAFT, and RCG are ways to address those limitations.

RAG is focused on retrieving and incorporating information from unstructured data sources like documents and web pages. TAG is focused on querying and leveraging structured data from databases.

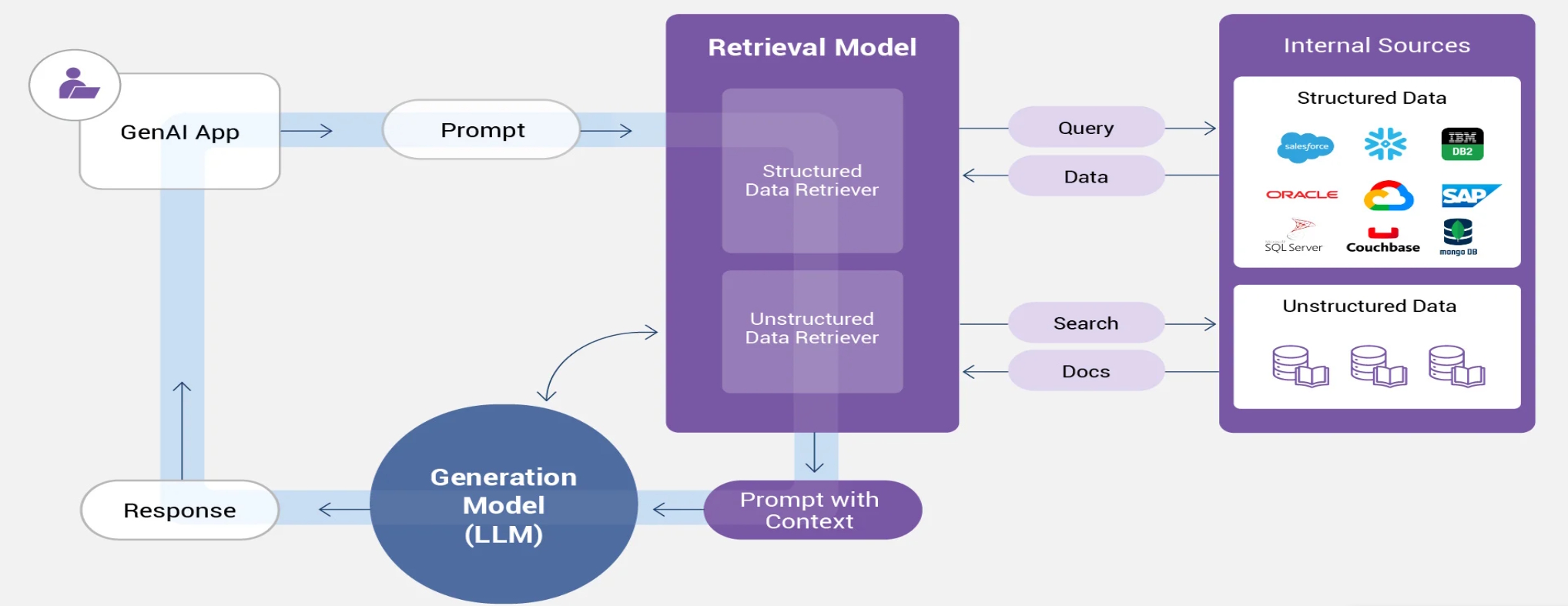

RAG begins by adding new information beyond the original training dataset, usually gathered from external sources, to a knowledge database. When a query is submitted, it’s converted into a vector representation, much as a generic LLM converts its input text. That vector is then matched against the vectors stored in the knowledge database to find the most relevant content.
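The matching step can be illustrated with a minimal sketch. It assumes a toy bag-of-words "embedding" and cosine similarity in place of a real embedding model and vector database; the function names (`embed`, `cosine`, `retrieve`) and the sample knowledge base are hypothetical and chosen only for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding (assumption): a bag-of-words count vector.
    A production system would call a trained embedding model instead."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k documents whose vectors are closest to the query vector."""
    query_vec = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(query_vec, embed(d)), reverse=True)
    return ranked[:top_k]


# Hypothetical knowledge base standing in for the external data store.
KNOWLEDGE_BASE = [
    "The warranty period for the X100 sensor is three years.",
    "The X100 sensor operates from 3.3 V or 5 V supplies.",
    "Our office is closed on public holidays.",
]

top_docs = retrieve("What is the warranty period for the X100 sensor?", KNOWLEDGE_BASE, top_k=1)
print(top_docs[0])
```

In practice, the stored vectors would be computed once and indexed rather than recomputed for every query, as this sketch does for brevity.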

LLMs are typically non-deterministic and might not produce the same output each time a given query is submitted. Prompt engineering helps make responses more consistent. In RAG, prompt engineering is also used to incorporate the relevant external data into the prompt, enhancing the model’s contextual understanding with the goal of producing more detailed and (hopefully) insightful responses.
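One simple way to picture this is a fixed prompt template that places the retrieved passages ahead of the user's question. The sketch below is an assumption about how such a template might look, not a prescribed format; the function name `build_augmented_prompt` and the sample passages are hypothetical, and the call to an actual LLM is left out.

```python
def build_augmented_prompt(question: str, passages: list[str]) -> str:
    """Combine retrieved passages and the user question into a single prompt.
    Using the same template every time is one way to reduce variation in responses."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer the question using the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


# Hypothetical passages, as if returned by a retrieval step.
passages = [
    "The warranty period for the X100 sensor is three years.",
    "Warranty claims require proof of purchase.",
]
prompt = build_augmented_prompt("How long is the X100 warranty?", passages)
print(prompt)
```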

A key to optimal RAG operation is keeping the external database as current as possible with periodic updates. That enables the system to deliver the most relevant responses, even in the absence of time-consuming and expensive training updates (Figure 1).

How does TAG work?

While RAG is especially useful for accessing information not present in the original training dataset, TAG suits applications such as enhancing search engine capabilities, particularly where structured data and complex queries are involved. TAG is implemented in a series of steps (Figure 2).

- The user submits a query, for example, to a search engine.

- The system identifies and retrieves relevant data, possibly using SQL queries to find specific information in a table or database.

- Prompt engineering is used to incorporate the retrieved data into the user query, creating a more detailed ‘augmented prompt.’

- The augmented prompt is used by the LLM to generate a response that is more precise and focused than possible using only the original query.
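The steps above can be sketched with Python's built-in `sqlite3` module. This is a minimal illustration under stated assumptions: the `parts` table, its columns, and the helper names `retrieve_rows` and `build_tag_prompt` are hypothetical, the SQL is hard-coded rather than generated from the user query, and the final LLM call is omitted.

```python
import sqlite3

# Hypothetical in-memory table standing in for the structured data source.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE parts (name TEXT, category TEXT, price_usd REAL, in_stock INTEGER)"
)
conn.executemany(
    "INSERT INTO parts VALUES (?, ?, ?, ?)",
    [
        ("TMP-A", "sensor", 1.20, 500),
        ("TMP-B", "sensor", 4.75, 0),
        ("ACC-C", "sensor", 2.10, 120),
        ("REG-D", "regulator", 0.80, 900),
    ],
)


def retrieve_rows(db: sqlite3.Connection, category: str, max_price: float) -> list[tuple]:
    """Retrieval step: filter the table on several criteria with a parameterized SQL query."""
    cursor = db.execute(
        "SELECT name, price_usd FROM parts "
        "WHERE category = ? AND price_usd <= ? AND in_stock > 0 "
        "ORDER BY price_usd",
        (category, max_price),
    )
    return cursor.fetchall()


def build_tag_prompt(question: str, rows: list[tuple]) -> str:
    """Augmentation step: fold the retrieved rows into the user query."""
    table = "\n".join(f"{name} | {price:.2f} USD" for name, price in rows)
    return f"Data (name | price):\n{table}\n\nQuestion: {question}\nAnswer using only the data above."


question = "Which in-stock sensors cost 3 USD or less?"
rows = retrieve_rows(conn, "sensor", 3.00)
augmented_prompt = build_tag_prompt(question, rows)
print(augmented_prompt)  # this string would then be passed to the LLM
```

Because the filtering happens in the database, only the matching rows reach the prompt, which keeps the augmented prompt short even when the underlying table is large.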

TAG is generally more appropriate than RAG for applications like querying databases and filtering data based on multiple criteria. It can also be less computationally intensive than RAG and more efficient when dealing with large datasets and complex queries.

Improving RAG

RAFT and RAG are both approaches that leverage external knowledge to improve LLM performance. RAG dynamically integrates external data sources into the LLM’s response generation process.

Fine-tuning is a type of continuous improvement of the LLM itself. RAFT involves additional training of an LLM to improve its performance on a particular task or in a specific domain. The process modifies the LLM’s internal parameters to better align with the nuances of a specific task.

RAFT can be especially useful in situations involving dynamic information environments and applications that require nuanced responses. However, it requires high-quality data and is computationally demanding. If not properly implemented, it can lead to the loss of previously learned general knowledge, called catastrophic forgetting.

Retrieval-centric generation

RCG is another approach to improving the performance of LLMs, used particularly for interpreting complex indexed or curated data. RAG and RCG can both be used to get information from curated sources during inference. In RAG, the model is the major source of information and is aided by the retrieved data. In RCG, most of the information is external to the model.

Instead of augmenting the LLM’s own knowledge, RCG focuses on prioritizing the retrieved data to constrain the response (Figure 3).

- RAG is designed for tasks that need to combine general knowledge with specific information from external data sources and answer complex questions.

- RCG is optimized for tasks that must maintain the context, style, and accuracy of the original information, such as summarization, paraphrasing, or creating consistent content.
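One way to picture how retrieved data can constrain a response is a prompt that restricts the model to the supplied sources and declines when nothing was retrieved. The sketch below is only an illustration of that idea under stated assumptions, not the RCG architecture itself; `build_constrained_prompt` and `NO_DATA_REPLY` are hypothetical names, and the LLM call is omitted.

```python
from typing import Optional

# Hypothetical fallback text used when the curated sources contain nothing relevant.
NO_DATA_REPLY = "No supporting information was found in the curated sources."


def build_constrained_prompt(task: str, passages: list[str]) -> Optional[str]:
    """Return a prompt that limits the model to the given passages.
    Returns None when there are no passages, so the caller can reply with
    NO_DATA_REPLY instead of letting the model answer from its own memory."""
    if not passages:
        return None
    sources = "\n".join(f"- {p}" for p in passages)
    return (
        "Use ONLY the sources below. Do not add facts that are not in them. "
        f"If they do not cover the request, reply exactly: {NO_DATA_REPLY}\n\n"
        f"Sources:\n{sources}\n\n"
        f"Task: {task}"
    )


constrained = build_constrained_prompt(
    "Summarize the warranty terms in one sentence.",
    ["The warranty period for the X100 sensor is three years."],
)
empty = build_constrained_prompt("Summarize the warranty terms.", [])
print(constrained if constrained is not None else NO_DATA_REPLY)
```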

Summary

RAG is designed for using information from unstructured data sources like documents and web pages. TAG is focused on querying and leveraging structured data from sources like tables or databases. Extensions of RAG include RAFT, which provides additional training of an LLM to improve its performance on a particular task or in a specific domain, and RCG, which maintains the context, style, and accuracy of the original information.

