Deeplite announced Deeplite Runtime (DeepliteRT), a new addition to its platform that makes AI models even smaller and faster in production deployment, without compromising accuracy. Customers will benefit from lower power consumption, reduced costs and the ability to utilize existing Arm CPUs to run AI models.

As organizations bring more edge devices into their AI and deep learning strategies, they face the challenge of running AI models on small devices such as security cameras, commercial drones, and mobile phones, which often have very limited power budgets and processor resources. DeepliteRT addresses this challenge by running ultra-compact quantized models on commodity Arm processors while maintaining model accuracy.

Deeplite has partnered with Arm to run DeepliteRT on its Cortex-A Series CPUs in everyday devices such as home security cameras. Businesses can run complex AI tasks on these low-power CPUs, eliminating the need for expensive and power-hungry GPU-based hardware solutions that limit AI adoption.

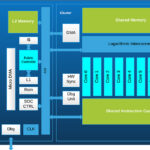

DeepliteRT builds upon the company’s existing inference optimization solutions, including Deeplite Neutrino, an intelligent optimization engine for Deep Neural Networks (DNNs) on edge devices, where size, speed and power are often major challenges. Neutrino automatically optimizes DNN models for target resource constraints: it takes a large DNN model that has already been trained for a specific use case, accounts for the constraints of the target edge device, and delivers a smaller, more efficient model that remains accurate.
