NVIDIA announced NVIDIA Omniverse Replicator, a powerful synthetic-data-generation engine that produces physically simulated synthetic data for training deep neural networks.

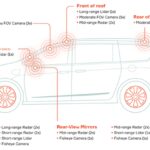

In its first implementations of the engine, the company introduced two applications for generating synthetic data: one for NVIDIA DRIVE Sim, a virtual world that hosts digital twins of autonomous vehicles, and another for NVIDIA Isaac Sim, a virtual world for digital twins of manipulation robots.

These two replicators allow developers to bootstrap AI models, fill real-world data gaps, and label the ground truth in ways humans cannot. Data generated in these virtual worlds can cover a broad range of diverse scenarios, including rare or dangerous conditions that can’t regularly or safely be experienced in the real world.

Autonomous vehicles and robots built using this data can master skills across an array of virtual environments before applying them in the physical world.

Omniverse Replicator augments human-labeled real-world data, which is costly and laborious to produce and can be error-prone and incomplete, with large, diverse, physically accurate datasets tailored to the needs of AV and robotics developers. It also enables the generation of ground-truth data that is difficult or impossible for humans to label, such as velocity, depth, occluded objects, adverse weather conditions, or the movement of objects tracked across sensors.

Already an invaluable data-generation engine for NVIDIA’s DRIVE autonomous vehicle and Isaac robotics teams, Omniverse Replicator will be made available next year to developers to build domain-specific data-generation engines.

Omniverse Replicator is part of NVIDIA Omniverse, a virtual world simulation and collaboration platform for 3D workflows. Learn more about Omniverse Replicator for DRIVE Sim and for Isaac Sim.
