The short answer is no. Here’s why.

Solid-State Drives (SSDs) have no mechanical or moving parts, which makes them ideal for mobile devices. SSDs are built on flash memory and serve as a replacement for hard disk drive (HDD) storage in computers. At the lowest level, SSDs are based on transistors (similar to DRAM), but flash is non-volatile memory, which means that data persists when power is removed. At present, NAND-based flash memory is the technology in most widespread use for SSD-based storage drives.

As storage drives, SSDs have a higher cost per bit than the older platter-style hard drives. However, SSDs have much faster performance, primarily because they do not suffer from delays due to the mechanical operations associated with hard disk drives. Like hard drives, SSDs do many reads/writes in the course of a long lifetime. However, SSDs handle writes differently than one might expect, which creates some implications for file system design.

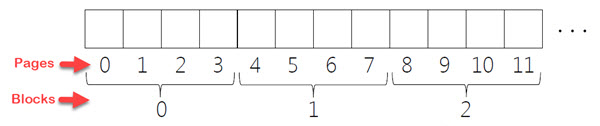

An SSD contains blocks made of “pages.” Each page is a few KB in size, and each block is made up of many pages. A block might be 128 KB or 256 KB, with a fairly small page at 4 KB or so (see Figure 1). To overwrite a single page that already holds data, you first have to erase the whole block. Another drawback of SSDs versus HDDs for storage is that, depending on the type of flash used, a block will fail after a few thousand to a few hundred thousand erasures, so the SSD has to be managed for even wear across its blocks.
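The cost of the erase-before-write rule can be illustrated with a toy model. The sketch below is not how any particular drive works; the `Block` class and its `rewrite_page` method are hypothetical names made up for illustration, and the sizes (4 KB pages, 256 KB blocks) are the example figures from the paragraph above. It shows a naive in-place update, where changing one page means saving the block's contents, erasing the block, and programming every page back.

```python
PAGE_SIZE = 4 * 1024                        # 4 KB page (example figure from the text)
BLOCK_SIZE = 256 * 1024                     # 256 KB block (example figure from the text)
PAGES_PER_BLOCK = BLOCK_SIZE // PAGE_SIZE   # 64 pages per block


class Block:
    """Toy model of one flash block (hypothetical, for illustration only)."""

    def __init__(self):
        # An erased page reads back as all 1 bits, i.e. 0xFF bytes.
        self.pages = [b"\xff" * PAGE_SIZE for _ in range(PAGES_PER_BLOCK)]
        self.erase_count = 0

    def erase(self):
        """Erase the whole block; this is the operation that wears it out."""
        self.pages = [b"\xff" * PAGE_SIZE for _ in range(PAGES_PER_BLOCK)]
        self.erase_count += 1

    def rewrite_page(self, index, data):
        """Naively update one page in place.

        Returns the number of bytes physically programmed.
        """
        if len(data) != PAGE_SIZE:
            raise ValueError("data must be exactly one page long")
        saved = list(self.pages)   # copy the current contents out of the block
        saved[index] = data        # apply the one-page change to the copy
        self.erase()               # the whole block must be erased first
        self.pages = saved         # then every page is programmed back
        return PAGES_PER_BLOCK * PAGE_SIZE


block = Block()
written = block.rewrite_page(3, b"\x00" * PAGE_SIZE)
print(written // PAGE_SIZE)   # pages programmed in order to change one page
```

In this model a 4 KB change costs one block erase and 256 KB of programming. Real SSD controllers generally avoid this worst case by writing the new data to an already-erased page elsewhere and remapping, which is also how erasures get spread evenly across blocks.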

Reading an SSD page requires that the entire contents of the page be retrieved, which can take around 25 to 75 µs to complete. Unlike with HDDs, the SSD retrieval time is independent of where the last retrieval of data occurred. No particular order of requests is more advantageous than another. Erasing a block takes anywhere from 1.5 to 4.5 ms and involves setting every bit of every page in the block to 1. Programming means writing to a page, but since an erased page is already all 1s, only the selected 0s need to be written, which can take as little as 200 µs to complete. Programming a page is faster than erasing a block but slower than reading a page.
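The asymmetry between erasing and programming can be sketched at the bit level. The snippet below is a simplified model, assuming a page is a plain byte array; the function names `erase` and `program` are illustrative and do not come from any real flash API. Erasing sets every bit to 1, and programming can only change 1s to 0s, which is modeled here as a bitwise AND with the new data.

```python
ERASED = 0xFF  # a byte with all bits set to 1


def erase(page: bytearray) -> None:
    """Set every bit in the page to 1 (on real flash this happens per block)."""
    for i in range(len(page)):
        page[i] = ERASED


def program(page: bytearray, data: bytes) -> None:
    """Write data by clearing bits only: a 1 can become 0, never the reverse."""
    for i, value in enumerate(data):
        page[i] &= value


page = bytearray(4)
erase(page)
program(page, bytes([0xA5, 0x00, 0xFF, 0x0F]))
print(page.hex())   # a5 00 ff 0f: the data lands intact on an erased page

program(page, bytes([0xFF, 0xFF, 0xFF, 0xFF]))
print(page.hex())   # unchanged: programming cannot turn 0s back into 1s
```

The second `program` call leaves the page as it was, which is why changing stored data requires an erase first.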

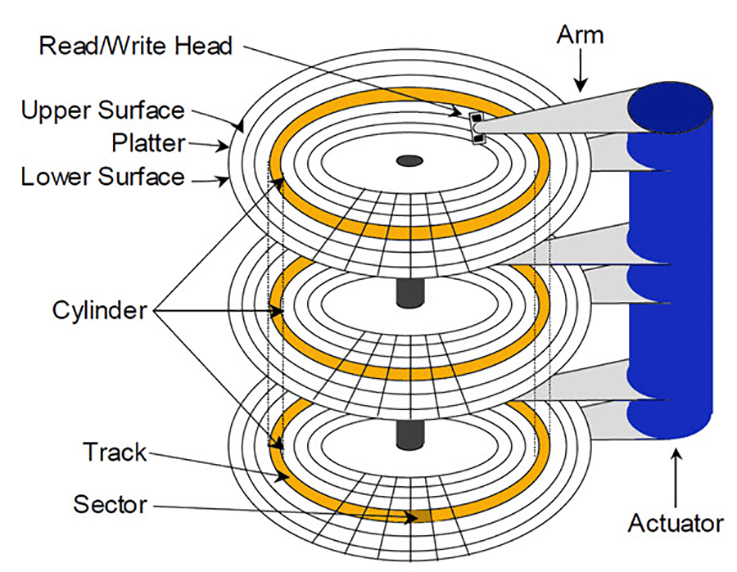

Compared to HDDs, however, SSDs are much faster, since an HDD has an average “seek” latency of 4 – 10 ms and a 2 – 7 ms average rotational latency (time required for the desired sector to reach the HDD head). Cache is used to improve data access time by attempting to predict what data will be needed next.
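As a rough sanity check on "much faster," the ranges quoted in this article can be compared directly. The sketch below uses only those figures (25 to 75 µs for an SSD page read, 4 to 10 ms seek plus 2 to 7 ms rotational latency for an HDD) and assumes a single random read with no caching and no transfer time, so it is a back-of-the-envelope estimate rather than a benchmark.

```python
# All times in microseconds, taken from the ranges quoted in the text.
SSD_READ_US = (25, 75)
HDD_SEEK_US = (4_000, 10_000)
HDD_ROTATE_US = (2_000, 7_000)


def midpoint(bounds):
    """Middle of a (low, high) range."""
    low, high = bounds
    return (low + high) / 2


ssd_typical = midpoint(SSD_READ_US)
hdd_typical = midpoint(HDD_SEEK_US) + midpoint(HDD_ROTATE_US)

# The narrowest gap pairs the fastest HDD access with the slowest SSD read.
narrowest = (HDD_SEEK_US[0] + HDD_ROTATE_US[0]) / SSD_READ_US[1]
# The widest gap pairs the slowest HDD access with the fastest SSD read.
widest = (HDD_SEEK_US[1] + HDD_ROTATE_US[1]) / SSD_READ_US[0]

print(f"typical: {hdd_typical / ssd_typical:.0f}x")
print(f"range:   {narrowest:.0f}x to {widest:.0f}x")
```

Under these assumptions a random read is on the order of a hundred times faster on the SSD, even when the least favorable ends of both ranges are paired.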

Although fast, SSDs do not eliminate the need for Random Access Memory (RAM) local to the CPU. One reason is that DDR memory (e.g., DDR3 or DDR4) is faster at present and may continue to improve along with SSD rates. (DDR stands for Double Data Rate, and is a type of memory used for a CPU’s RAM.) However, even if all memory were equally fast, there would still be a limiting factor that would require RAM rather than just hooking up a CPU directly to a petabyte (PB) of SSD.

The limiting factor for speed would still be the physical location of the memory, in which case RAM (nearer to the CPU and limited in size) would always win. Another issue with solid-state memory (e.g., flash or DDR3) is that the larger the memory bank, the longer it takes to find the needed data, as each piece of data is addressed within memory. Sheer size would mean that addresses would need to increase in length to accommodate such a large monolithic chunk of memory. Whether the address of each memory unit were 32 or 64 bits long, the processor would need to read each (longer) address every single time it fetched an operand from memory.
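The point about address length can be made concrete with a small calculation. The sketch below assumes byte addressing and binary units (1 PB taken as 2^50 bytes); the helper name `address_bits` is made up for illustration.

```python
def address_bits(size_bytes: int) -> int:
    """Minimum number of address bits needed to give every byte its own address."""
    if size_bytes < 1:
        raise ValueError("size must be at least one byte")
    # Addresses run from 0 to size_bytes - 1; count the bits in the largest one.
    return max(1, (size_bytes - 1).bit_length())


GIB = 2**30
PIB = 2**50

print(address_bits(4 * GIB))    # the most a 32-bit address can reach
print(address_bits(16 * GIB))   # a RAM-sized memory
print(address_bits(1 * PIB))    # a petabyte-sized memory
```

A 32-bit address tops out at 4 GB, so a petabyte of directly addressed storage needs addresses of at least 50 bits, well beyond what a RAM-sized memory requires.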

Another advantage of SSDs is that, unlike HDDs, they are not prone to data damage if dropped. Modern HDDs are protected by accelerometers so that if a laptop is dropped, for instance, the HDD is “parked” and the head is moved off the platter as soon as the fall is sensed and before the laptop hits the ground. However, HDDs are still in wide use because they are as much as ten times cheaper than similarly sized SSDs.

1- Simply, is it possible to allocate part of an SSD to act as computer memory and eliminate the use of RAM in the computer? 2- Windows is able to use an additional flash drive as extra temporary space. Now, if we can dedicate part of an SSD as memory, the problem of adding more RAM will be eliminated.

It would be possible, but it would wear out the SSD fairly quickly in a frequently used PC, as SSDs usually have a write lifetime of around 150 TB. RAM, on the other hand, works differently (it only holds data while powered on) and has no specified limit on write cycles (it lasts many, many years).

Hello, I think the illustrations in figures 1 and 2 are swapped.

Excellent explanation.

Best regards,

Fernando Lichtschein is correct.

The Figures do not match the Figure descriptions—either the description or the image needs to be swapped.

Thank you, Chris and Fernando!