An AI governor is a framework, set of policies, or an oversight mechanism designed to ensure that the development and use of AI systems are ethical, safe, transparent, and compliant with legal and societal standards. The term can also refer to an actual piece of code or circuit (governor logic) used as a safety mechanism in a specific AI system.
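To make the second sense of the term concrete, here is a minimal sketch of governor logic as a piece of code. It is an illustration only: the `SpeedGovernor` class, its limits, and the units are hypothetical and are not taken from any specific product or standard. The idea is that every command from the AI passes through a small, simple check before it reaches an actuator.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpeedGovernor:
    """Hypothetical governor that bounds a speed command before it reaches an actuator."""

    max_speed: float      # assumed hard speed limit, in m/s
    min_clearance: float  # assumed minimum distance to an obstacle, in m

    def limit(self, requested_speed: float, clearance: float) -> float:
        # Stop outright if anything is closer than the allowed clearance.
        if clearance < self.min_clearance:
            return 0.0
        # Otherwise clamp the request into [-max_speed, max_speed].
        return max(-self.max_speed, min(self.max_speed, requested_speed))


governor = SpeedGovernor(max_speed=1.5, min_clearance=0.5)
print(governor.limit(requested_speed=3.0, clearance=2.0))  # clamped to 1.5
print(governor.limit(requested_speed=1.0, clearance=0.2))  # stopped: 0.0
```

Keeping the governor separate from the AI model means its behavior can be reviewed and tested on its own, whatever the model proposes.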

Physical AI (PAI) uses motors, actuators, and sensors to bridge digital AI (DAI) and the real world. It can operate in public spaces, as robotaxis do, or in protected spaces, as with autonomous robots in industrial operations or surgical assistance robots.

Governance for physical AI (PAI) differs from governance for generative AI and other DAI applications like chatbots, due to varied considerations related to safety, physical world interactions, accountability, and the potential for real-world damage and harm.

For purposes of analyzing governance requirements, PAI can be split into two categories: centralized or self-contained, and distributed applications. Distributed PAI (DPAI) can have higher levels of uncertainty and risk.

For example, the “existence problem” focuses on difficulties in creating systems that can reliably exist and operate safely outside of controlled digital simulations or factory settings.

The “social acceptance problem” of DPAI applications arises from concerns over trust, accountability, and the erosion of human autonomy and social interaction. Governance is needed to overcome both the existence and acceptance problems.

Overcoming the limitations described by Cannikin’s Law can be more challenging. Also known as the wooden bucket principle, the law holds that a bucket can hold only as much water as its shortest stave allows. The development of PAI depends on at least five disciplines, including materials science, mechanical engineering, chemistry, biology, and computer science, with varying degrees of maturity and different development trajectories. Cannikin’s Law helps developers focus on the limitations of the “laggard” discipline, since building robust, trustworthy AI requires diverse components to work together seamlessly, a vital aspect of modern AI governance (Figure 1).
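As a rough illustration of the weakest-link reasoning, the sketch below treats overall capability as the minimum across disciplines. The maturity scores are hypothetical placeholders on an assumed 0 to 1 scale, chosen only to show the mechanics; they are not measurements.

```python
# Hypothetical maturity scores on an assumed 0-1 scale; illustrative only.
maturity = {
    "materials science": 0.7,
    "mechanical engineering": 0.9,
    "chemistry": 0.6,
    "biology": 0.4,
    "computer science": 0.8,
}


def laggard(scores: dict[str, float]) -> tuple[str, float]:
    """Return the discipline with the lowest score, and that score."""
    name = min(scores, key=scores.get)
    return name, scores[name]


name, capability = laggard(maturity)
print(f"System capability is capped at {capability} by {name}")
```

Under this simple model, raising any score other than the lowest one leaves the overall result unchanged, which is why attention goes to the laggard first.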

Agentic AI governance

Traditional AI governance assumes predictability and focuses on pre-deployment checks and supervised tasks. Dynamic, goal-oriented agentic AI systems that plan and act independently instead require continuous, real-time oversight.

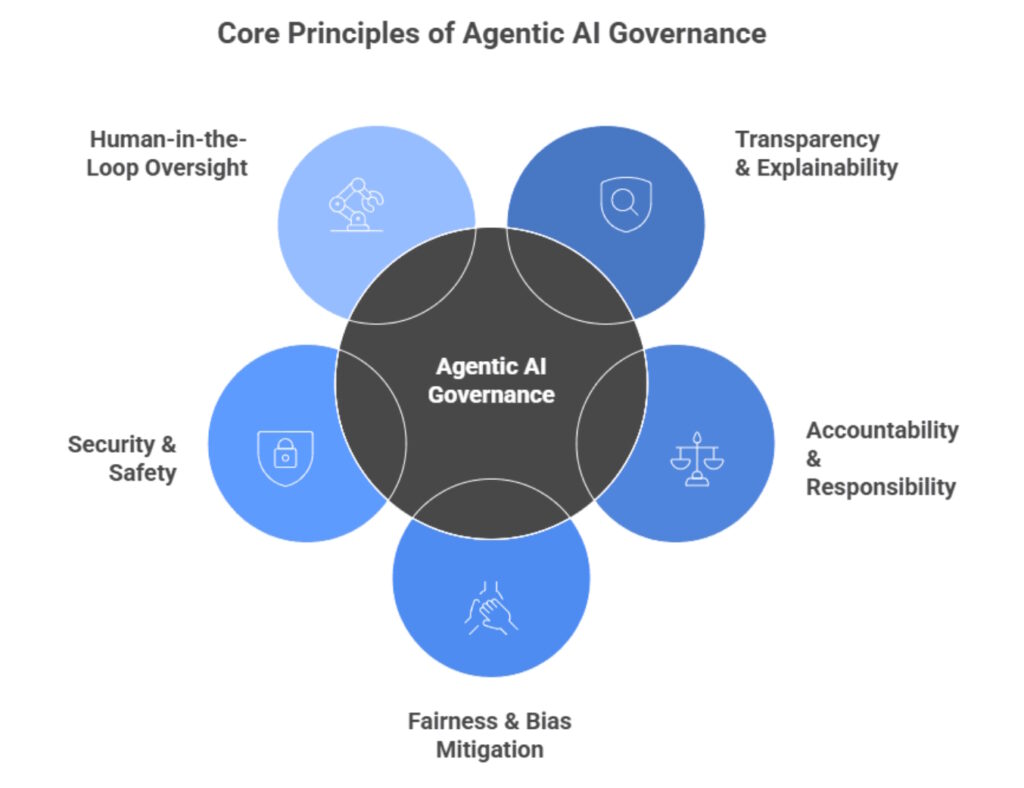

Some core considerations for governance of agentic AI include (Figure 2):

- Transparency must be supported with clear explanations of agentic AI decisions.

- Clear chains of responsibility are required for system design and for legal requirements.

- Continuous monitoring is needed to ensure that the application does not drift toward discriminatory or biased behavior.

- Security needs range from protecting the application from adversarial attacks to protecting users from harm.

- Human-in-the-loop oversight can provide continuous training, collaboration, and intervention.

Figure 2. Some elements of agentic AI governance. (Image: kanerika)
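Several of these considerations can be sketched together as a runtime gate that reviews each action an agent proposes. The example below is a simplified illustration under stated assumptions: the `OversightGate` and `ProposedAction` names, the risk score in the range 0 to 1, and both thresholds are hypothetical and do not come from any framework or regulation.

```python
from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"  # hand off to a human reviewer
    BLOCK = "block"


@dataclass
class ProposedAction:
    agent_id: str
    description: str
    rationale: str  # the agent's own explanation, kept for transparency
    risk: float     # assumed risk estimate in [0, 1]


@dataclass
class OversightGate:
    escalate_at: float = 0.3  # assumed thresholds, not from any standard
    block_at: float = 0.8
    audit_log: list = field(default_factory=list)

    def review(self, action: ProposedAction) -> Verdict:
        if action.risk >= self.block_at:
            verdict = Verdict.BLOCK
        elif action.risk >= self.escalate_at:
            verdict = Verdict.ESCALATE
        else:
            verdict = Verdict.ALLOW
        # Record who proposed what, why, and the outcome, for accountability.
        self.audit_log.append(
            (action.agent_id, action.description, action.rationale, verdict)
        )
        return verdict


gate = OversightGate()
action = ProposedAction("agent-1", "open loading dock door", "delivery scheduled", 0.5)
print(gate.review(action))  # Verdict.ESCALATE
```

The audit log supports transparency and chains of responsibility, while the escalation path is where human-in-the-loop oversight would plug in. A real system would also need the bias monitoring and security controls listed above, which this sketch omits.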

Combining agentic and PAI

The combination of agentic and PAI complicates governance by transforming abstract risks into tangible physical harm. That can make it difficult to assign accountability for autonomous actions, manage unpredictable consequences, and ensure that physical systems adhere to evolving ethical and regulatory standards.

Governing this combination requires more robust rules for safety, ethics, and control to align PAI activities with human intent. Some combinations are less dangerous than others. For example, smart building applications that combine agentic AI and PAI are more likely to cause discomfort than outright injuries.

On the other hand, applications like surgical assistance and collaborative industrial robots that work closely with humans have increased risks of causing physical harm to people and require tighter levels of control and governance.

Mandatory governance or recommendations?

The U.S. NIST AI Risk Management Framework (AI RMF) and the European Union AI Act take two different approaches to AI governance.

The EU AI Act directly regulates AI systems that interact with “physical or virtual environments.” That includes PAI as well as DAI. In the case of PAI, it focuses on robotics, autonomous vehicles, critical infrastructure like water systems and electric grids, medical devices, and industrial automation systems. It is especially concerned with protecting people from “high-risk” applications and imposes strict requirements for safety, transparency, and human oversight.

The NIST AI Risk Management Framework (AI RMF), on the other hand, is a structured, voluntary approach to AI governance without a specific focus on PAI. It’s designed to complement cybersecurity frameworks and addresses AI-specific risks. It takes a lifecycle approach and includes recommendations related to governing, mapping, measuring, and managing AI risks (Figure 3).
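One way a team might track its work against those four functions is a simple risk register. The sketch below is an assumption about how such tracking could look, not part of the framework itself: the `RiskRecord` class and the example notes are hypothetical, and only the four function names come from the AI RMF.

```python
from dataclasses import dataclass, field

# The four core functions named in the NIST AI RMF.
RMF_FUNCTIONS = ("govern", "map", "measure", "manage")


@dataclass
class RiskRecord:
    """Hypothetical register entry for one AI risk."""

    risk: str
    notes: dict = field(default_factory=dict)

    def record(self, function: str, note: str) -> None:
        if function not in RMF_FUNCTIONS:
            raise ValueError(f"unknown RMF function: {function}")
        self.notes.setdefault(function, []).append(note)

    def missing(self) -> list:
        # Functions with no recorded activity yet for this risk.
        return [f for f in RMF_FUNCTIONS if f not in self.notes]


entry = RiskRecord("robot arm moves while a person is inside the work cell")
entry.record("map", "identified during hazard analysis")
entry.record("measure", "tracking near-miss events per shift")
print(entry.missing())  # ['govern', 'manage']
```

Because the framework is voluntary, a gap reported by `missing()` is a prompt for review rather than a compliance failure.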

Summary

Governance is critical to establish trust that AI is safe, that its operation aligns with human ethics, and that it meets all regulatory requirements. Governance is implemented differently for DAI and PAI. It’s also implemented differently for centralized PAI and distributed PAI. Europe uses strict regulations while the U.S. follows a voluntary approach.

