In its most basic form, RISC-V is an open standard instruction set architecture (ISA) based on reduced instruction set computer (RISC) design principles. RISC-V is an open specification and platform; it is not an open-source processor. All other aspects of the RISC-V ecosystem build on that foundation. The hardware and software in the RISC-V ecosystem are extensive and include:

- Open-source software

- Commercial software

- Open-source cores

- Commercial cores

- In-house cores

- Design and verification tools

Larger users of RISC-V may employ in-house developed cores in some designs and commercial cores in others. Some software may be commercially licensed, and some software will be open source. A benefit of using RISC-V is that all these variations comply with the same basic RISC-V specification, supporting a common software toolchain.
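Because every conforming core implements the same base encodings, a given 32-bit instruction word means the same thing to any RISC-V core or toolchain. As an illustration only, the following Python sketch decodes the fields of an R-type instruction from the RV32I base ISA. It covers a small, hand-picked subset of register-register instructions (ADD, SUB, AND, OR, XOR); the function names are hypothetical and not taken from any real toolchain.

```python
from typing import NamedTuple


class RType(NamedTuple):
    """Fields of a 32-bit RISC-V R-type instruction, high bits first."""
    funct7: int
    rs2: int
    rs1: int
    funct3: int
    rd: int
    opcode: int


def decode_rtype(word: int) -> RType:
    """Split a 32-bit instruction word into its R-type bit fields."""
    return RType(
        funct7=(word >> 25) & 0x7F,  # bits 31..25
        rs2=(word >> 20) & 0x1F,     # bits 24..20
        rs1=(word >> 15) & 0x1F,     # bits 19..15
        funct3=(word >> 12) & 0x7,   # bits 14..12
        rd=(word >> 7) & 0x1F,       # bits 11..7
        opcode=word & 0x7F,          # bits 6..0
    )


# Major opcode shared by RV32I register-register ("OP") instructions.
OP = 0b0110011

# Illustrative subset only: (funct7, funct3) -> mnemonic.
MNEMONICS = {
    (0b0000000, 0b000): "add",
    (0b0100000, 0b000): "sub",
    (0b0000000, 0b111): "and",
    (0b0000000, 0b110): "or",
    (0b0000000, 0b100): "xor",
}


def disassemble(word: int) -> str:
    """Return assembly text for a supported R-type word, else raise ValueError."""
    f = decode_rtype(word)
    if f.opcode != OP:
        raise ValueError(f"not an OP-class R-type instruction: {word:#010x}")
    name = MNEMONICS.get((f.funct7, f.funct3))
    if name is None:
        raise ValueError(f"unsupported funct7/funct3 in {word:#010x}")
    return f"{name} x{f.rd}, x{f.rs1}, x{f.rs2}"


print(disassemble(0x003100B3))  # add x1, x2, x3
```

The fixed field positions are what let one assembler, compiler, and debugger serve cores from many different vendors.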

RISC-V needs a complete software ecosystem surrounding it in order to thrive. The ecosystem components are diverse, spreading across all layers from low-level firmware and boot loaders up to a fully functional operating system kernel, applications, and design and verification tools. Each of these components is important to ensure the success of RISC-V.

Today, over 100 RISC-V cores and over 40 RISC-V SoCs and SoC platforms are available, and the numbers are growing. While the RISC-V Foundation (now RISC-V International) “is chartered to standardize, protect, and promote the free and open RISC-V instruction set architecture together with its hardware and software ecosystem for use in all computing devices,” numerous other organizations are building out various parts of the RISC-V ecosystem. The following are several examples of the extensive efforts underway.

RISC-V for energy-efficient systems

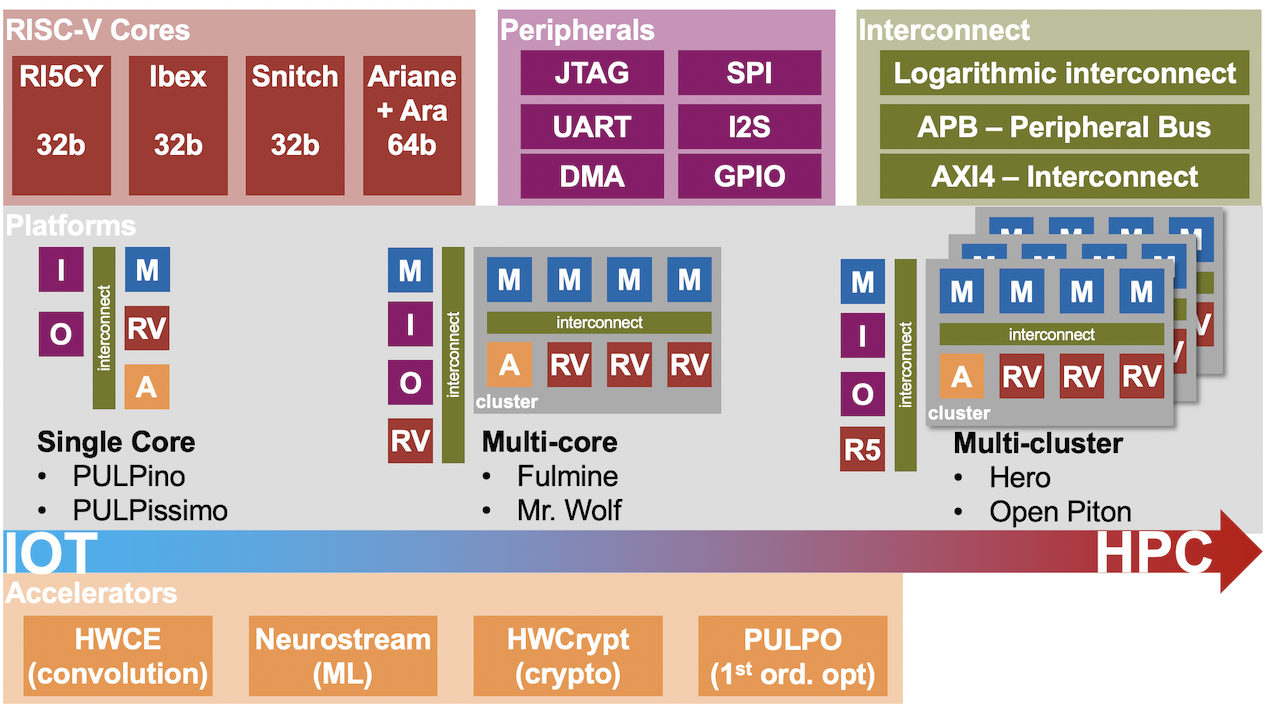

The Parallel Ultra Low Power (PULP) Platform is a joint effort between the Integrated Systems Laboratory (IIS) of ETH Zürich and the Energy-Efficient Embedded Systems (EEES) group of the University of Bologna to develop efficient architectures for ultra-low-power processing. PULP is an open-source platform. The efforts of PULP have resulted in a state-of-the-art microcontroller system and a multi-core platform able to achieve leading-edge energy-efficiency and widely-tunable performance.

PULP’s development of a parallel ultra-low-power programmable architecture enables systems to meet the computational requirements of IoT applications without exceeding the power envelope of a few milliwatts typical of miniaturized, battery-powered systems. PULP features:

- Efficient implementations of RISC-V cores

  - 32-bit, 4-stage core CV32E40P (formerly RI5CY)

  - 64-bit, 6-stage CVA6 (formerly Ariane)

  - 32-bit, 2-stage Ibex (formerly Zero-riscy)

- Complete systems

  - Single-core microcontrollers (PULPissimo, PULPino)

  - Multi-core IoT processors (OpenPULP)

  - Multi-cluster heterogeneous accelerators (HERO)

- Open-source Solderpad license

  - A perpetual, worldwide, non-exclusive, no-charge, royalty-free, irrevocable license

- Peripherals

  - I2C, SPI, HyperRAM, GPIO

Future development plans call for PULP to support multiple application programming interfaces such as OpenMP, OpenCL, and OpenVX that allow agile application porting, development, performance tuning, and debugging.

RISC-V for embedded computing

Various embedded computing applications are expected to provide large opportunities to leverage the capabilities of RISC-V. The CHIPS (Common Hardware for Interfaces, Processors and Systems) Alliance is an organization that develops and hosts open-source hardware code (IP cores), interconnect IP (physical and logical protocols), and open-source software development tools for design verification. The Linux Foundation hosts the CHIPS Alliance to provide a collaborative environment that lowers the cost of developing IP and tools for hardware development.

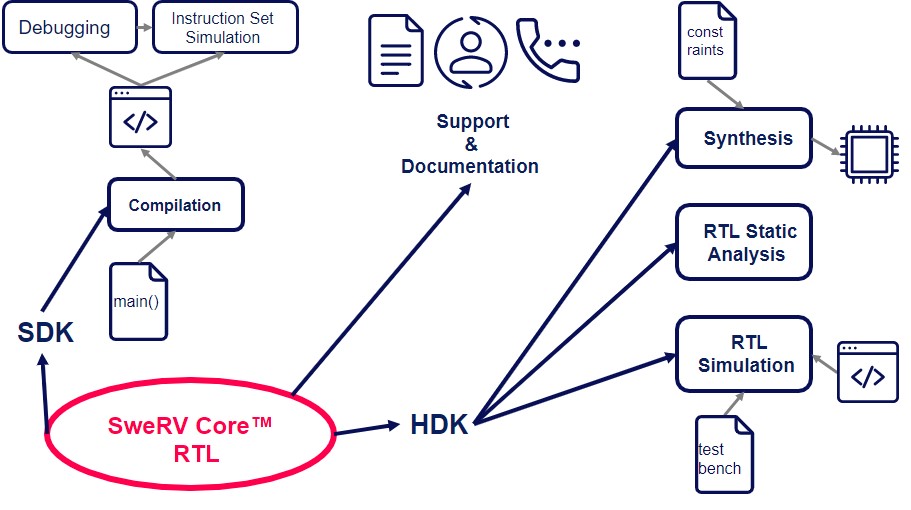

The SweRV cores are a good example of the diversity that is developing in the RISC-V ecosystem. Western Digital initially developed these RISC-V processor cores for use in their upcoming products. The RTL source of the cores is available free of charge from the CHIPS Alliance repository on GitHub or as a part of a comprehensive, ready-to-go Support Package from Codasip.

There are several cores in the family. SweRV Core EH1 is a 32-bit, 2-way superscalar core with a 9-stage pipeline. SweRV Core EH2 builds on EH1 and adds dual-threaded capability for additional performance. Lastly, SweRV Core EL2 is a smaller core with moderate performance, designed to replace state machines and other logic functions in SoCs.

The SweRV Core EH1 is a high-performance core with a small footprint, suitable for embedded devices supporting data-intensive applications such as storage controllers, industrial IoT, real-time analytics in surveillance systems, and other smart systems. The SweRV Core Support Package includes:

- Integrated development environment (IDE), with open-source EDA tools and scripts for commercial tools set up and ready to use

- Register transfer level (RTL) designs including stable versions of the selected SweRV cores and an example SoC design (SweRVolf)

- Software Development Tools including a compiler toolchain (GNU) and on-chip debugger (OpenOCD)

- Hardware Development Tools including open-source simulators (Verilator, Whisper ISS) and support for leading commercial simulators and synthesizers

- Complete documentation, samples, and libraries

- Technical support via online forums, e-mail, phone, and on-site

- Customization, optimization, and full verification of custom cores as on-request services

For designers who want a turnkey system to evaluate SweRV performance and features, CHIPS Alliance offers SweRVolf, a portable and extendable SoC reference platform. SweRVolf is designed as a base platform for designers to build upon, and it can also be used to teach SoC design, computer architecture, and embedded systems in an open-source environment. SweRVolf is built with FuseSoC, another open-source project. FuseSoC is a package manager and a set of build tools for hardware description language (HDL) code, designed to increase the reuse of IP cores and to speed the creation, simulation, and fabrication of SoCs.

In another collaborative effort, RISC-V International and CHIPS Alliance are updating the OmniXtend Cache Coherency specification and protocol. Originally developed by Western Digital, OmniXtend is an open networking protocol for exchanging coherence messages directly with processor caches, memory controllers, and various accelerators. It’s designed to be an efficient way of attaching new accelerators, storage, and memory devices to RISC-V SoCs and can be used to create multi-socket RISC-V systems.

The initial focus of the OmniXtend working group is the creation of an open, cache-coherent, unified memory standard for multicore architectures. Initial goals include making the standard easier for designers to implement by updating the OmniXtend specification and protocol, building out architectural simulation models and a reference RTL implementation, and creating a verification workbench.

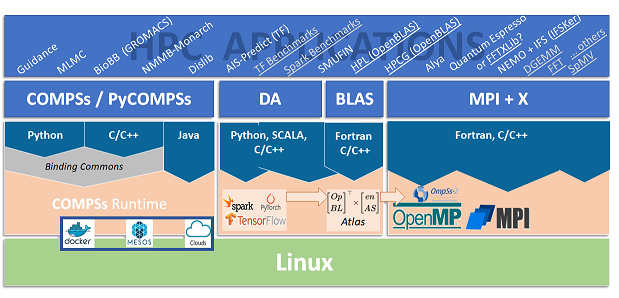

RISC-V for high-performance computing

For designers of exascale supercomputers and other high-performance computing (HPC) environments, the MareNostrum Exascale Emulation Platform (MEEP) provides a flexible FPGA-based environment for working with hardware/software co-designs. A key goal of MEEP is to improve the RISC-V ecosystem by developing a better software toolchain and a suite of HPC applications. The group expects to play two roles within the RISC-V exascale computing ecosystem:

- Creation of an evaluation platform for pre-silicon development efforts, capable of balancing scalability and speed.

- Creation of a software development platform to overcome the limitations of current software simulation tools and accelerate HPC software maturity in the RISC-V environment.

MEEP will be based on European technology. It will develop new and reusable IP initially targeting FPGAs and later extending to ASICs. MEEP will have a strong focus on applications that have the highest impact on HPC systems and the strongest support in the RISC-V community.

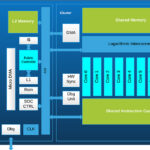

The vision for the MEEP FPGA chipset accelerator includes both memory and computation:

- A complex and comprehensive memory hierarchy from high bandwidth memory and intelligent controllers down to scratchpads and L1 caches.

- The computational engine will be a RISC-V vector and systolic (VAS) accelerator, in which the primary computational unit is called a VAS accelerator tile. Each VAS accelerator tile will be a multi-core RISC-V processor capable of handling scalar, vector, and systolic array instructions, and will include a scalar core and three coupled co-processors, one of which is associated with the memory controller.

Design and verification

A significant benefit of using RISC-V is that all cores, whether open-source, in-house designs, or commercially licensed, meet the same RISC-V specification, enabling the use of a common software toolchain.

The open nature of the RISC-V ISA gives designers many configuration and implementation options, plus the flexibility to add custom extensions and instructions. Compliance testing is necessary to confirm that all of these implementations conform to the specification. Compliance testing is less rigorous than full verification: it does not exhaustively test all the functional details of a core, but simply confirms that the RTL implementation conforms to the ISA specification.
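Configuration choices like these are conventionally summarized in an ISA string such as `rv32imac` or `rv64gc`, where `G` abbreviates `IMAFD` plus the `Zicsr` and `Zifencei` extensions and non-standard (custom) extensions carry an `X` prefix. The Python sketch below splits such a string into its parts. It is simplified under stated assumptions: multi-letter extensions always follow an underscore, version suffixes such as `2p0` are not handled, and the extension name `xmyext` in the usage line is hypothetical.

```python
import re
from dataclasses import dataclass, field


@dataclass
class IsaConfig:
    xlen: int                                        # 32, 64, or 128
    base: str                                        # "I" or "E"
    single: list[str] = field(default_factory=list)  # e.g. ["M", "A", "C"]
    multi: list[str] = field(default_factory=list)   # e.g. ["Zicsr", "Xmyext"]

    def custom_extensions(self) -> list[str]:
        # Non-standard (custom) extensions are named with an "X" prefix.
        return [e for e in self.multi if e.startswith("X")]


# Base prefix: width (32/64/128) followed by I, E, or the G shorthand.
_PREFIX = re.compile(r"rv(32|64|128)([ieg])", re.IGNORECASE)


def parse_isa(isa: str) -> IsaConfig:
    """Simplified parser: assumes multi-letter extensions follow underscores
    and that no version numbers (e.g. '2p0') are present."""
    m = _PREFIX.match(isa)
    if m is None:
        raise ValueError(f"not a RISC-V ISA string: {isa!r}")
    xlen, base = int(m.group(1)), m.group(2).upper()
    letters, *multi = isa[m.end():].split("_")
    single = [c.upper() for c in letters]
    if base == "G":
        # "G" abbreviates IMAFD plus the Zicsr and Zifencei extensions.
        base = "I"
        single = ["M", "A", "F", "D"] + single
        multi = ["Zicsr", "Zifencei"] + multi
    # Normalize multi-letter names: uppercase prefix letter, lowercase rest.
    multi = [e[0].upper() + e[1:].lower() for e in multi if e]
    return IsaConfig(xlen, base, single, multi)


cfg = parse_isa("rv64gc_xmyext")  # "xmyext" is a hypothetical custom extension
print(cfg.xlen, cfg.single, cfg.custom_extensions())
```

Keeping custom `X` extensions separate from the standard ones mirrors why compliance testing matters: the standard portion is what a shared test suite can check.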

The RISC-V International Compliance Working Group (CWG) is a technical committee developing a robust test framework and the test suites themselves. The currently available resources include the free-to-use riscvOVPsim reference simulator, which the CWG uses to develop the test suites. As an envelope simulation model, riscvOVPsim supports all of the configuration options of the ratified ISA specification, in addition to the draft specifications for bit manipulation and vectors. The test suites will be extended as needed and are designed to form the basis of any test plan for a RISC-V processor to ensure compliance with the specification.

Summary

The components of the RISC-V ecosystem are diverse, spreading across all layers from low-level firmware and boot loaders up to a fully functional operating system kernel, applications, and design and verification tools. Numerous efforts target the needs of specific classes of applications, ranging from ultra-low-power computing for the IoT to the vast range of embedded systems and even exascale supercomputing platforms.

References

CHIPS Alliance

FuseSoC

MEEP Project

PULP Platform

RISC-V International
