Peripheral Component Interconnect Express (PCIe) is more than just a high-speed serial bus that’s widely used in computers. PCIe is also found in some embedded systems, where it serves as a cost-effective, high-performance, reliable, low-latency, and low-power bus that can rapidly transfer data directly between PCIe-connected devices. These devices are typically CPUs, GPUs, FPGAs, I/O devices, and even solid-state storage such as Non-Volatile Memory Express (NVMe) drives. Additionally, PCIe has advanced features that enable more than just data transfers: data can be routed selectively so that devices can be shared across a PCIe network.

Generation 4 PCIe can theoretically move 1969 MB/s per lane, and 16 lanes can be combined to move up to 31.5 GB/s. PCIe Gen 5, which is expected to be released in 2019, will double the Gen 4 transfer rate per lane. PCIe is a mature, stable, two-decades-old technology in extensive use that has kept up with the data transfer demands of other technologies, demonstrating end-to-end latencies as low as 300 ns.
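The per-lane figure follows from the signalling rate and the line encoding, and the 31.5 GB/s total corresponds to a 16-lane link. The short Python sketch below reproduces that arithmetic. The function and constant names are illustrative only, and the numbers are theoretical maxima in one direction that ignore packet and protocol overhead.

```python
# Raw per-lane signalling rate in gigatransfers per second (GT/s).
GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}

# Gen 3 and later use 128b/130b encoding:
# 128 payload bits are carried in every 130 bits on the wire.
ENCODING_EFFICIENCY = 128 / 130


def lane_mb_per_s(gen: int) -> float:
    """Theoretical one-direction throughput of a single lane, in MB/s (10**6 bytes)."""
    payload_bits_per_s = GT_PER_S[gen] * 1e9 * ENCODING_EFFICIENCY
    return payload_bits_per_s / 8 / 1e6


def link_gb_per_s(gen: int, lanes: int) -> float:
    """Theoretical one-direction throughput of a multi-lane link, in GB/s."""
    return lane_mb_per_s(gen) * lanes / 1000


if __name__ == "__main__":
    print(f"Gen 4 per lane: {lane_mb_per_s(4):.0f} MB/s")
    for lanes in (1, 4, 8, 16):
        print(f"Gen 4 x{lanes}: {link_gb_per_s(4, lanes):.2f} GB/s")
    print(f"Gen 5 per lane: {lane_mb_per_s(5):.0f} MB/s")
```

Running it gives roughly 1969 MB/s for one Gen 4 lane and about 31.5 GB/s for a Gen 4 x16 link, with Gen 5 doubling the per-lane figure.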

PCIe is widely used in many embedded industries as a modern I/O bus technology. It is also attractive as a stable, dependable, low-latency network for applications that call for real-time responses, such as automotive and industrial systems or, outside the embedded space, financial transactions.

Ethernet is a mainstay of communications, but it adds processor overhead and latency because every transfer has to pass through a networking protocol stack. PCIe is faster because devices can communicate directly, peer-to-peer, without using processor resources or memory. This makes PCIe suitable for real-time work where latency is critical: it can deliver data between components with latencies of under a microsecond. PCIe is also very reliable and has error checking built in, all while using very little power.

PCIe 4.0 products are already on the market, and the standard for PCIe Gen 5 is supposed to be released in 2019.

PCIe’s advanced features

PCIe can use internal DMA (Direct Memory Access) engines to move data around, lessening the load on processors. Using PCIe advanced features does not require you to become a PCIe expert, either. Vendor software can take advantage of advanced features within PCIe behind a simpler front end.

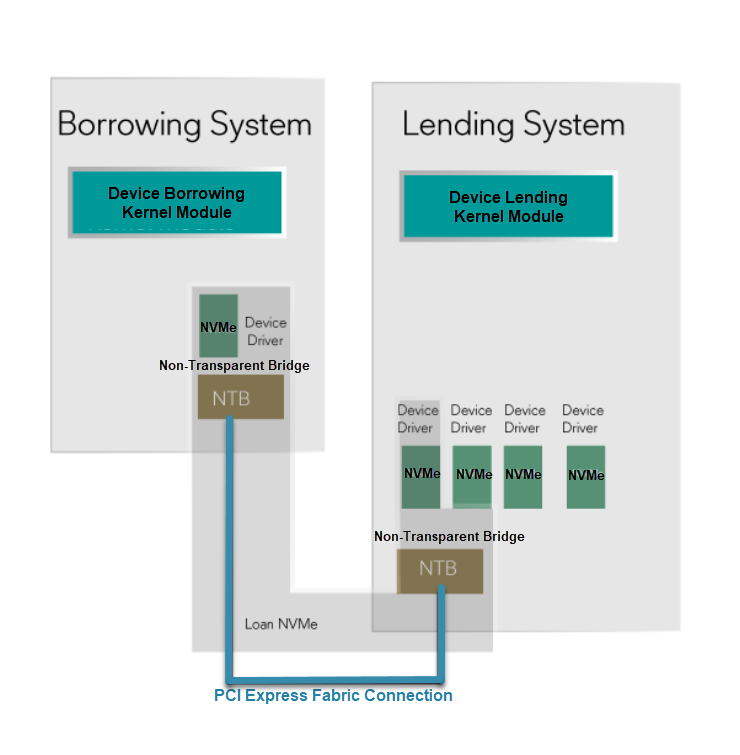

Software can also take advantage of PCIe’s non-transparent bridging function to create an advanced PCIe-based fabric. One use for such a fabric is building “composable architectures” by adding and removing devices from multiple hosts within the fabric. In other words, software that takes advantage of PCIe’s advanced features can connect multiple CPUs, FPGAs, GPUs, and storage devices through a PCIe switch. For example, one NVMe drive can be shared among several PCIe-resident devices (such as CPUs or GPUs) for data storage. A PCIe fabric can thus dynamically assign resources to applications or systems, reallocating them from one system to another in microseconds.

Creating device lending capabilities or composable architectures using advanced PCIe features requires advanced software development capability. Although they might support PCIe, chip makers do not typically offer complete, advanced solutions using PCIe.

One example of software that creates a PCIe-enabled composable architecture for embedded systems is the eXpressWare Software Suite by Dolphin Interconnect Solutions. The suite supports adding devices to the PCIe network without shutting down (a.k.a. “hot add”) as well as device lending. Using device lending over PCIe, you can reallocate resources and reconfigure systems without having to manually implement advanced PCIe capabilities or add new modules and drivers.

Device lending via PCIe makes the most of available resources with low latency and little computing overhead. A remote I/O resource can be added to or removed from a system on the network without physically installing the device in that specific system.

Smart I/O on PCIe

Intel introduced the first specification for the PCI bus in the early ’90s. The PCI Special Interest Group (PCI-SIG) was formed so that computer manufacturers would have guidance for complying with the standard. PCI Express (PCIe) was first released in 2003. In mid-2017, PCIe Generation 4.0 was announced.

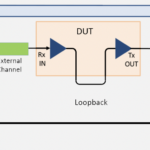

Dolphin’s software for device lending, the SmartIO suite, makes PCIe’s advanced features usable by those unfamiliar with them. The software works across the PCIe fabric, letting modules and devices interact without requiring modifications to drivers, existing software applications, or operating systems, while preserving performance. Latency in accessing a remote device on the PCIe fabric is similar to that of a local device because data transfers carry so little overhead. Ethernet, by contrast, incurs protocol overhead on each data transfer, which adds latency. PCIe’s advanced features let any system or device within the same PCIe fabric temporarily borrow a device for as long as it is needed. Once a resource is no longer required, it is returned to its original setup or allocated to a different system.
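The borrow-and-return lifecycle described above can be pictured with a small toy model in Python. This is only a bookkeeping sketch under stated assumptions: the `Device` and `Fabric` classes and their methods are hypothetical names invented for illustration, they are not part of Dolphin’s SmartIO or eXpressWare software, and nothing here touches real PCIe hardware.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Device:
    name: str
    owner: str                       # host the device is physically installed in
    borrower: Optional[str] = None   # host currently using it remotely, if any


@dataclass
class Fabric:
    """Hypothetical registry tracking which host is using each device."""
    devices: Dict[str, Device] = field(default_factory=dict)

    def add(self, name: str, owner: str) -> None:
        # Register a device under the host that physically holds it.
        self.devices[name] = Device(name, owner)

    def borrow(self, name: str, host: str) -> None:
        # Lend the device to another host; only one borrower at a time.
        dev = self.devices[name]
        if dev.borrower is not None:
            raise RuntimeError(f"{name} is already lent to {dev.borrower}")
        dev.borrower = host

    def give_back(self, name: str) -> None:
        # Return the device to its original owner.
        self.devices[name].borrower = None

    def user_of(self, name: str) -> str:
        # The borrower if the device is on loan, otherwise the owner.
        dev = self.devices[name]
        return dev.borrower or dev.owner


if __name__ == "__main__":
    fabric = Fabric()
    fabric.add("nvme0", owner="hostA")
    fabric.borrow("nvme0", host="hostB")   # hostB now uses the drive remotely
    print(fabric.user_of("nvme0"))
    fabric.give_back("nvme0")              # the drive returns to hostA
    print(fabric.user_of("nvme0"))
```

In this model a device can be on loan to only one host at a time, which is a simplification chosen to keep the sketch short rather than a statement about how a real PCIe fabric behaves.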

Dolphin’s device lending software provides command-line tools that you can use to issue commands directly or integrate into a higher-level resource management system. When accessing a remote device on the PCIe network, performance will be close to that of a local device. Device lending takes advantage of the intrinsic properties of PCIe’s advanced features and doesn’t require changes to the Linux kernel or to APIs, for example.

PCIe is taken for granted by many, as it has been around for a long time and steadily updated along the way. Without awareness of the possibilities that PCIe’s advanced features can provide, opportunities for elegant solutions to everyday problems get set aside. However, the potential for cost savings is there when PCIe’s advanced features can remove the need for additional equipment and installation labor.
