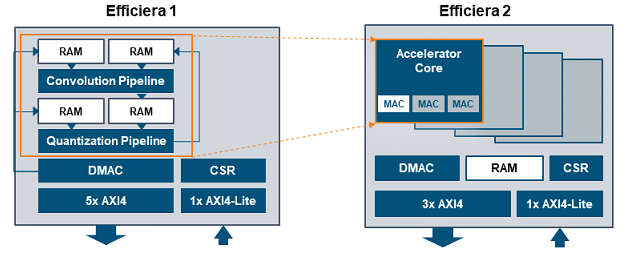

LeapMind Co., Ltd. has announced version 2 of Efficiera, its ultra-low-power AI inference accelerator IP, scheduled to be available in December 2021. Efficiera is valued for its low power consumption, high performance, small footprint, and performance scalability. Efficiera v2 expands the range of applications by covering a wider range of performance and making the product more broadly available, while maintaining the circuit scale of the minimum configuration. These enhancements are based on lessons learned from the initial product introduction and market evaluation.

Efficiera is an ultra-low-power AI inference accelerator IP specialized for convolutional neural network (CNN) inference that runs as a circuit on field-programmable gate array (FPGA) or application-specific integrated circuit (ASIC) devices. Its extremely low bit quantization technology reduces the number of quantization bits to 1 or 2, maximizing the power and area efficiency of convolution, which accounts for most of the inference processing, without the need for advanced semiconductor manufacturing processes or special cell libraries. With this product, deep learning functions can be incorporated into a variety of edge devices that are constrained by power, cost, and thermals, including consumer electronics such as home appliances, industrial equipment such as construction machinery, surveillance cameras, broadcasting equipment, and small machines and robots. All of these have been technically difficult in the past.
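To give a rough sense of what quantizing to 1 or 2 bits means, here is a minimal Python sketch. It is a generic illustration, not LeapMind's method: the uniform quantizer, the `[-1, 1]` value range, and the function names `quantize_uniform` and `binary_dot` are all assumptions made for this example. The second function shows a commonly used trick for 1-bit values, where a dot product reduces to XNOR plus a bit count, which hints at why such low bit widths can make convolution cheap in hardware.

```python
def quantize_uniform(values, bits, lo=-1.0, hi=1.0):
    """Snap each value to the nearest of 2**bits evenly spaced levels in [lo, hi].

    Hypothetical helper for illustration only; real low-bit schemes
    typically learn or calibrate the range instead of fixing it.
    """
    levels = 2 ** bits
    step = (hi - lo) / (levels - 1)
    quantized = []
    for v in values:
        clipped = min(max(v, lo), hi)          # clamp into the representable range
        index = round((clipped - lo) / step)   # nearest level index, 0 .. levels-1
        quantized.append(lo + index * step)
    return quantized


def binary_dot(a_bits, b_bits, n):
    """Dot product of two length-n vectors over {-1, +1}, packed as integers.

    Encoding assumed here: bit 1 means +1, bit 0 means -1.
    XNOR marks the positions where the signs agree; each agreement
    contributes +1 and each disagreement contributes -1.
    """
    mask = (1 << n) - 1
    agreements = bin(~(a_bits ^ b_bits) & mask).count("1")
    return 2 * agreements - n


weights = [-0.9, -0.2, 0.3, 2.0]
one_bit = quantize_uniform(weights, bits=1)   # two levels: -1.0 and 1.0
two_bit = quantize_uniform(weights, bits=2)   # four levels: -1, -1/3, 1/3, 1

# [+1, -1, +1, +1] and [+1, +1, -1, +1] packed most-significant bit first
dot = binary_dot(0b1011, 0b1101, n=4)
```

With 1 bit, every weight collapses to one of two values, and with 2 bits to one of four, so each weight needs far less storage than a 32-bit float and the multiply-accumulate work can be replaced by simple bitwise logic.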

Improvements to the design and verification methodology and a review of the development process have raised product quality to a level suitable not only for FPGAs but also for ASICs and ASSPs. LeapMind will also begin providing a model development environment, the Network Development Kit (NDK), which enables users to develop deep learning models for Efficiera themselves, something that was not offered previously.
