As we move toward 5G Advanced and 6G, the way we model the wireless medium is shifting. The old, standardized channel models are no longer sufficient: high-dimensional systems such as Multiple-Input Multiple-Output (MIMO) need site-specific models to work effectively. This FAQ discusses how generative AI is moving us from those generic scenarios to what we call “neural surrogates.”

Q: Why are standardized 3GPP and deterministic ray-tracing models approaching their limits for 6G?

A: We have traditionally used standardized channel models, such as those from 3GPP and WINNER, which group environments into broad, high-level buckets such as ‘Urban Macro’ or ‘Rural Macro.’ They are useful for establishing a general baseline during system design, but they do not capture the propagation characteristics of an actual site. As a result, they frequently miss the unique multipath environment at a specific city intersection or inside an industrial facility.
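To make the “bucket” idea concrete, here is a minimal Python sketch of a scenario-based path-loss lookup. It uses the line-of-sight PL1 expressions from 3GPP TR 38.901 for Urban Macro (UMa) and Urban Micro Street Canyon, which hold only below the breakpoint distance; shadow fading and NLOS cases are left out, and the dictionary and function names are illustrative. Two very different intersections at the same distance and frequency get exactly the same answer, which is what a site-specific model tries to improve on.

```python
import math

# Line-of-sight PL1 expressions from 3GPP TR 38.901 (valid for 10 m <= d2D <= d'BP).
# d3d is the 3D Tx-Rx distance in metres, fc is the carrier frequency in GHz.
# Shadow fading, the beyond-breakpoint PL2 branch, and NLOS are omitted in this sketch.
LOS_PATH_LOSS_DB = {
    "UMa": lambda d3d, fc: 28.0 + 22.0 * math.log10(d3d) + 20.0 * math.log10(fc),
    "UMi-Street Canyon": lambda d3d, fc: 32.4 + 21.0 * math.log10(d3d) + 20.0 * math.log10(fc),
}


def scenario_path_loss_db(scenario: str, d3d_m: float, fc_ghz: float) -> float:
    """Median LOS path loss (dB) for a scenario bucket; the actual site never enters."""
    return LOS_PATH_LOSS_DB[scenario](d3d_m, fc_ghz)


# Two hypothetical intersections with very different buildings but the same summary geometry
corner_a = scenario_path_loss_db("UMa", d3d_m=100.0, fc_ghz=3.5)
corner_b = scenario_path_loss_db("UMa", d3d_m=100.0, fc_ghz=3.5)
print(f"{corner_a:.2f} dB vs {corner_b:.2f} dB")  # identical by construction
```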

Deterministic ray tracing does provide that site-specific detail, but it is hard to apply in practice. It requires an accurate 3D model of the space and the electromagnetic properties of every material the signal interacts with. In most cases, it ends up too computationally slow and too data-hungry to be practical.
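The smallest possible ray tracer shows why material data matters. This Python sketch is a toy under stated assumptions, not a production ray tracer: it combines a direct ray with a single ground reflection located by the image method and weights the reflection by the Fresnel coefficient for horizontal polarization over lossless ground. The relative permittivity values and geometry are hypothetical; changing only the material changes the received field.

```python
import numpy as np

C0 = 299_792_458.0  # speed of light in vacuum, m/s


def fresnel_gamma_h(grazing_rad: float, eps_r: float) -> float:
    """Fresnel reflection coefficient, horizontal polarization, lossless ground."""
    s = np.sin(grazing_rad)
    root = np.sqrt(eps_r - np.cos(grazing_rad) ** 2)
    return (s - root) / (s + root)


def two_ray_gain(d_m, h_tx_m, h_rx_m, f_hz, eps_r):
    """Complex gain of a direct ray plus one ground-reflected ray (image method)."""
    lam = C0 / f_hz
    k = 2.0 * np.pi / lam
    d_los = np.hypot(d_m, h_tx_m - h_rx_m)      # direct path length
    d_ref = np.hypot(d_m, h_tx_m + h_rx_m)      # path length via the mirror image
    grazing = np.arctan2(h_tx_m + h_rx_m, d_m)  # grazing angle at the ground
    gamma = fresnel_gamma_h(grazing, eps_r)
    # Each ray: free-space amplitude lam / (4 pi r) and phase exp(-j k r)
    los = lam / (4.0 * np.pi * d_los) * np.exp(-1j * k * d_los)
    ref = gamma * lam / (4.0 * np.pi * d_ref) * np.exp(-1j * k * d_ref)
    return los + ref


# Same geometry, two hypothetical ground materials
for eps_r in (5.0, 15.0):
    g = two_ray_gain(d_m=50.0, h_tx_m=10.0, h_rx_m=1.5, f_hz=3.5e9, eps_r=eps_r)
    print(f"eps_r={eps_r:>4}: path loss {-20.0 * np.log10(abs(g)):.1f} dB")
```

A full ray tracer repeats this bookkeeping for every reflection, diffraction, and scattering path in a detailed 3D model, which is where the cost comes from.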

Generative AI offers a middle ground. Instead of manually building 3D models or running massive measurement campaigns, we can use it to learn the statistical patterns of a wireless channel directly from noisy or compressed observations. As Table 1 shows, this physics-informed approach provides the needed site specificity and flexibility without the traditional overhead.
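As a deliberately simple stand-in for a learned generative model, the Python sketch below fits a zero-mean complex Gaussian channel model to noisy snapshots and then samples new synthetic channels from it. It assumes the noise variance is known and uses a hypothetical exponential correlation profile as ground truth. Real generative models learn far richer, non-Gaussian structure, but the workflow (observe noisy data, learn the statistics, sample new channels) is the same.

```python
import numpy as np

rng = np.random.default_rng(0)


def crandn(*shape):
    """Circularly symmetric complex Gaussian samples with unit variance."""
    return (rng.standard_normal(shape) + 1j * rng.standard_normal(shape)) / np.sqrt(2.0)


def fit_channel_covariance(y, noise_var):
    """Estimate E[h h^H] from noisy snapshots y = h + n (rows are snapshots)."""
    s = y.T @ y.conj() / y.shape[0]         # sample covariance of y
    c = s - noise_var * np.eye(s.shape[0])  # subtract the known noise floor
    c = 0.5 * (c + c.conj().T)              # enforce Hermitian symmetry
    w, v = np.linalg.eigh(c)
    return (v * np.clip(w, 0.0, None)) @ v.conj().T  # clip to a valid PSD matrix


def sample_channels(c, n):
    """Draw n synthetic channels h ~ CN(0, c)."""
    w, v = np.linalg.eigh(c)
    root = v * np.sqrt(np.clip(w, 0.0, None))  # root @ root^H == c
    return crandn(n, c.shape[0]) @ root.T


# Hypothetical ground truth: 4 antennas, exponential correlation 0.9^|i-j|
m, n_obs, noise_var = 4, 20_000, 0.1
idx = np.arange(m)
c_true = 0.9 ** np.abs(idx[:, None] - idx[None, :])
h = crandn(n_obs, m) @ np.linalg.cholesky(c_true).T
y = h + np.sqrt(noise_var) * crandn(n_obs, m)

c_hat = fit_channel_covariance(y, noise_var)
synthetic = sample_channels(c_hat, 1_000)
print("covariance error:", np.linalg.norm(c_hat - c_true))
```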

Q: What is “Physics-Informed” modeling, and how does it ensure data integrity?

A: Earlier generative models mostly worked as black boxes: they often produced physically implausible channels or failed to generalize when moved to a different deployment. Newer methods, such as Sparse Bayesian Generative Modeling (SBGM), address this by building physical structure directly into the model.

SBGM exploits the structure of wave propagation to keep the generated channels physically consistent. Mathematically, it models each channel as conditionally zero-mean Gaussian with a structured covariance, such as a Toeplitz matrix. These constraints keep the model from hallucinating impossible data, so the synthetic channels are reliable enough for testing baseband equipment.
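The Toeplitz property has a simple physical origin. For a uniform linear array (ULA) with half-wavelength spacing, a channel made of a few plane-wave paths with random complex gains is conditionally zero-mean Gaussian, and its covariance depends only on the antenna-index difference i - j, so it is Toeplitz. The Python sketch below is a simplified illustration, not SBGM itself: it builds such a covariance from hypothetical path angles and powers, then shows that projecting a noisy sample covariance onto Toeplitz matrices (averaging each diagonal) can only move it closer to the true covariance.

```python
import numpy as np


def ula_steering(m, theta_rad):
    """Steering vector of an m-element ULA with half-wavelength spacing."""
    return np.exp(1j * np.pi * np.arange(m) * np.sin(theta_rad))


def toeplitz_project(s):
    """Frobenius-norm projection onto Toeplitz matrices: average each diagonal."""
    m = s.shape[0]
    out = np.zeros_like(s)
    for k in range(-(m - 1), m):
        rows = np.arange(max(0, -k), min(m, m - k))
        out[rows, rows + k] = np.diagonal(s, offset=k).mean()
    return out


rng = np.random.default_rng(1)
m, n = 8, 50
angles = np.deg2rad([-20.0, 10.0, 35.0])  # hypothetical path directions
powers = np.array([1.0, 0.5, 0.25])       # hypothetical path powers

a = np.stack([ula_steering(m, t) for t in angles], axis=1)  # m x K steering matrix
c_true = (a * powers) @ a.conj().T                          # sum_k p_k a_k a_k^H

# Conditionally zero-mean Gaussian channels: h = sum_k sqrt(p_k) g_k a_k, g_k ~ CN(0, 1)
g = (rng.standard_normal((n, 3)) + 1j * rng.standard_normal((n, 3))) / np.sqrt(2.0)
h = (g * np.sqrt(powers)) @ a.T

s = h.T @ h.conj() / n  # raw sample covariance from only 50 snapshots
err_raw = np.linalg.norm(s - c_true)
err_toep = np.linalg.norm(toeplitz_project(s) - c_true)
print(f"raw error {err_raw:.3f}, Toeplitz-projected error {err_toep:.3f}")
```

Because Toeplitz matrices form a linear subspace that contains the true covariance, the projection is guaranteed not to increase the error; this is the sense in which structure keeps estimates grounded.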

Q: Why is the industry moving from GANs to Diffusion Models for channel synthesis?

A: Generative Adversarial Networks (GANs) were the go-to tool for early channel modeling, but their training is unstable and prone to “mode collapse,” in which the generator gets stuck producing only a few types of samples and misses the full variety of real-world channels. Denoising Diffusion Probabilistic Models (DDPMs) are much more stable. They use a U-Net architecture to turn Gaussian noise into high-quality channel data gradually, removing a little noise at each step.
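The denoising loop itself is compact. The Python sketch below runs the standard DDPM ancestral sampler (linear beta schedule from 1e-4 to 0.02, T = 1000) on a toy target: a single real-valued channel coefficient drawn from N(0, 4). Because the toy target is Gaussian, the ideal noise predictor has a closed form, so it stands in for the trained U-Net here; with a real channel dataset, that noise predictor is the part the network learns.

```python
import numpy as np

T = 1000
betas = np.linspace(1e-4, 0.02, T)  # linear schedule used in the original DDPM paper
alphas = 1.0 - betas
alpha_bars = np.cumprod(alphas)
DATA_VAR = 4.0                      # toy target: x0 ~ N(0, DATA_VAR)


def eps_predictor(x_t, t):
    """Ideal E[eps | x_t] for Gaussian data; a trained U-Net approximates this."""
    ab = alpha_bars[t]
    return np.sqrt(1.0 - ab) * x_t / (ab * DATA_VAR + 1.0 - ab)


def ddpm_sample(n, rng):
    """Ancestral DDPM sampling: start from pure noise and denoise step by step."""
    x = rng.standard_normal(n)  # x_T ~ N(0, 1)
    for t in reversed(range(T)):
        eps = eps_predictor(x, t)
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps) / np.sqrt(alphas[t])
        noise = rng.standard_normal(n) if t > 0 else 0.0
        x = mean + np.sqrt(betas[t]) * noise  # reverse-step variance sigma_t^2 = beta_t
    return x


samples = ddpm_sample(20_000, np.random.default_rng(0))
print(f"sample mean {samples.mean():.3f}, variance {samples.var():.3f} (target {DATA_VAR})")
```

There is no discriminator and no adversarial game: training only regresses the noise predictor, which is the main source of the stability advantage over GANs.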

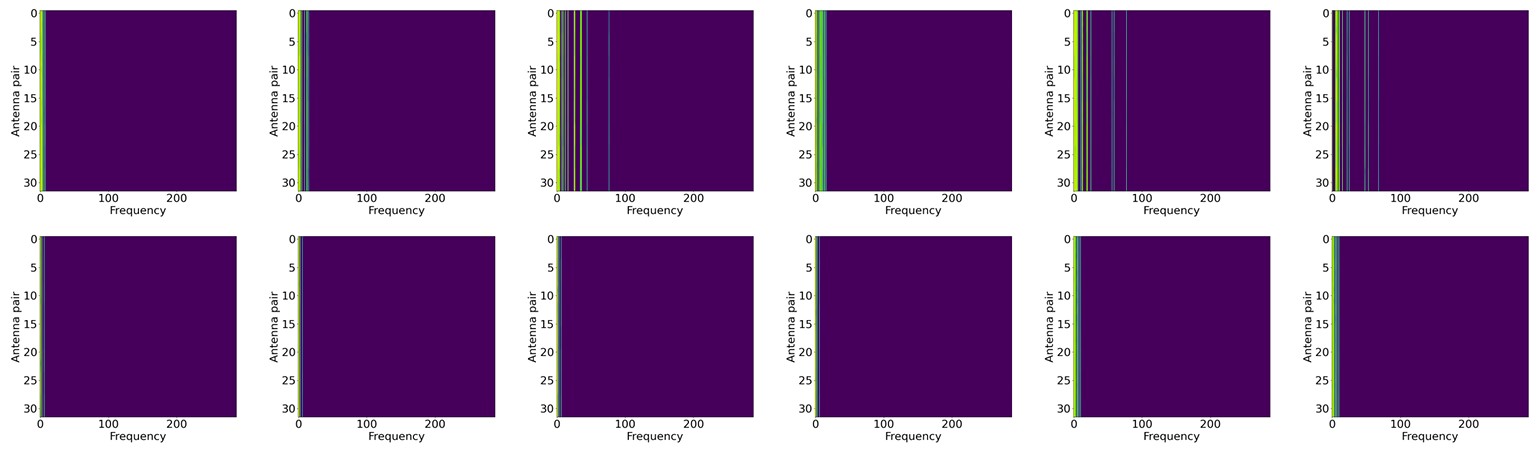

Figure 1 shows that diffusion models capture the true variety of a MIMO channel far better. GAN outputs can end up repetitive, whereas diffusion models produce realistic samples across a much wider range of conditions. This variety is key when you need to stress-test baseband algorithms against rare edge cases and deep fading events.
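One simple way to check whether a generator covers the tails is to compare its deep-fade rate with the theoretical value. For a Rayleigh-faded channel with unit mean power, P(|h|^2 < x) = 1 - exp(-x), so about 1% of samples fall 20 dB below the mean. The Python sketch below compares Rayleigh samples with a hypothetical mode-collapsed generator whose outputs cluster around a single channel state; the function name and the collapsed model are illustrative assumptions.

```python
import numpy as np


def deep_fade_rate(h, threshold_db=-20.0):
    """Fraction of samples whose power is threshold_db below the mean sample power."""
    p = np.abs(h) ** 2
    return np.mean(p < p.mean() * 10.0 ** (threshold_db / 10.0))


rng = np.random.default_rng(0)
n = 1_000_000
rayleigh = (rng.standard_normal(n) + 1j * rng.standard_normal(n)) / np.sqrt(2.0)
# Hypothetical "mode-collapsed" generator: every sample sits near one channel state
collapsed = 1.0 + 0.1 * (rng.standard_normal(n) + 1j * rng.standard_normal(n)) / np.sqrt(2.0)

theory = 1.0 - np.exp(-(10.0 ** (-20.0 / 10.0)))  # Rayleigh: P(|h|^2 < 0.01 * mean)
print(f"theory {theory:.5f}, Rayleigh samples {deep_fade_rate(rayleigh):.5f}, "
      f"collapsed generator {deep_fade_rate(collapsed):.5f}")
```

The collapsed generator produces essentially no deep fades, so a receiver tested only on its output would never see the events that dominate real-world error rates.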

Q: How does GenAI facilitate instantaneous Channel State Information (CSI) recovery?

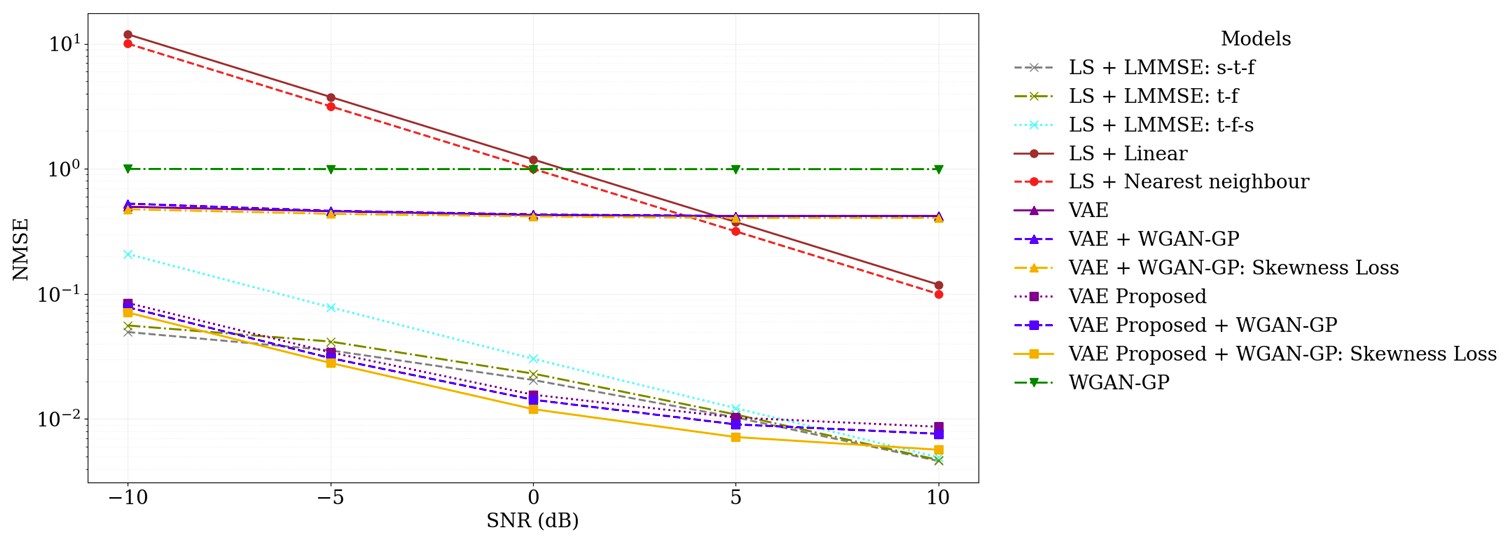

A: In 5G New Radio, the channel varies across time, frequency, and space, which makes life difficult for traditional estimators such as Least Squares (LS). Because LS uses no prior knowledge of the channel, it often cannot keep up under high mobility or heavy noise. That is where generative frameworks such as the VAE-WGAN-GP hybrid come in.
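For reference, the LS estimator on pilot subcarriers is just a per-subcarrier division, H_LS = Y / X. The Python sketch below uses hypothetical sizes, unit-modulus QPSK pilots, and an i.i.d. Rayleigh channel to show the consequence: because LS uses no prior about the channel, its mean-squared error equals the noise variance divided by the pilot power, regardless of how structured the channel actually is.

```python
import numpy as np

rng = np.random.default_rng(0)
trials, k, noise_var = 2_000, 64, 0.1  # hypothetical: 64 pilot subcarriers, SNR = 10 dB


def crandn(*shape):
    """Circularly symmetric complex Gaussian samples with unit variance."""
    return (rng.standard_normal(shape) + 1j * rng.standard_normal(shape)) / np.sqrt(2.0)


def ls_estimate(y, x):
    """Least-squares channel estimate on pilot subcarriers: H_LS = Y / X."""
    return y / x


h = crandn(trials, k)                                                    # true channel
x = np.exp(1j * np.pi / 4 * (2 * rng.integers(0, 4, (trials, k)) + 1))  # QPSK pilots, |x| = 1
y = h * x + np.sqrt(noise_var) * crandn(trials, k)                       # received pilots

mse_ls = np.mean(np.abs(ls_estimate(y, x) - h) ** 2)
print(f"LS MSE {mse_ls:.4f} (theory: noise_var / |x|^2 = {noise_var})")
```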

By accounting for skewness in the data, these hybrids remain much more stable during training. The goal is to close the gap between the real and estimated channels, and as Figure 2 shows, this leads to much lower estimation error in noisy environments. In effect, these learned priors let baseband processors recover the channel even when it is buried in noise.
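The benefit of a prior can be illustrated without any neural network. In the Python sketch below, the prior is simply a known frequency-domain channel covariance built from a hypothetical four-tap exponential power-delay profile, and the estimator is classical LMMSE applied to the LS output. This is a stand-in for the idea, not the VAE-WGAN-GP method: a generative model would supply a much richer, learned prior, but the effect of exploiting channel structure to suppress noise is the same.

```python
import numpy as np

rng = np.random.default_rng(0)
trials, k, n_taps, noise_var = 2_000, 64, 4, 0.5  # hypothetical sizes, SNR = 3 dB


def crandn(*shape):
    """Circularly symmetric complex Gaussian samples with unit variance."""
    return (rng.standard_normal(shape) + 1j * rng.standard_normal(shape)) / np.sqrt(2.0)


# Hypothetical prior: 4-tap exponential power-delay profile -> frequency-domain covariance
pdp = np.exp(-np.arange(n_taps))
pdp /= pdp.sum()
f = np.exp(-2j * np.pi * np.outer(np.arange(k), np.arange(n_taps)) / k)  # k x n_taps
c_h = (f * pdp) @ f.conj().T                                             # E[h h^H]

h = (crandn(trials, n_taps) * np.sqrt(pdp)) @ f.T                        # correlated responses
x = np.exp(1j * np.pi / 4 * (2 * rng.integers(0, 4, (trials, k)) + 1))  # QPSK pilots
y = h * x + np.sqrt(noise_var) * crandn(trials, k)

h_ls = y / x                                          # prior-free LS estimate
w = c_h @ np.linalg.inv(c_h + noise_var * np.eye(k))  # LMMSE filter C (C + s^2 I)^-1
h_lmmse = h_ls @ w.T                                  # apply the filter to every trial

mse_ls = np.mean(np.abs(h_ls - h) ** 2)
mse_lmmse = np.mean(np.abs(h_lmmse - h) ** 2)
print(f"LS MSE {mse_ls:.3f}, prior-based LMMSE MSE {mse_lmmse:.3f}")
```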

Q: What is the operational impact of this technology in Industry 4.0 environments?

A: The value of generative modeling is clearest in places like automated smart warehouses. These spaces are full of moving metal shelves that create unpredictable reflections and interference, and conventional static network planning cannot keep up with such rapid changes. Evo-WISVA is a model designed to handle this complexity. It pairs a memory-augmented VAE with a Convolutional LSTM to forecast SINR heatmaps, much as a weather forecast predicts where rain will fall.
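For readers unfamiliar with the target quantity, the Python sketch below computes one snapshot of an SINR heatmap on a warehouse-floor grid: the strongest access point serves each cell and all others interfere. The access-point positions, transmit power, noise floor, and log-distance path-loss model are hypothetical. A forecaster such as Evo-WISVA would predict how a sequence of such maps evolves over time; its VAE and ConvLSTM are not reproduced here.

```python
import numpy as np


def sinr_heatmap(ap_xy, grid_n=50, size_m=50.0, tx_dbm=20.0, pl_exp=3.0, noise_dbm=-90.0):
    """SINR (dB) per grid cell; the strongest AP serves and all other APs interfere.

    Path loss is a hypothetical log-distance model: PL(d) = 40 + 10 * pl_exp * log10(d) dB.
    """
    centers = (np.arange(grid_n) + 0.5) * size_m / grid_n
    gx, gy = np.meshgrid(centers, centers, indexing="ij")
    rx_mw = []
    for ax, ay in ap_xy:
        d = np.maximum(np.hypot(gx - ax, gy - ay), 1.0)  # clamp distances below 1 m
        pl_db = 40.0 + 10.0 * pl_exp * np.log10(d)
        rx_mw.append(10.0 ** ((tx_dbm - pl_db) / 10.0))
    rx_mw = np.stack(rx_mw)  # shape: (num_aps, grid_n, grid_n)
    signal = rx_mw.max(axis=0)
    interference = rx_mw.sum(axis=0) - signal
    noise_mw = 10.0 ** (noise_dbm / 10.0)
    return 10.0 * np.log10(signal / (interference + noise_mw))


# Two hypothetical access points on a 50 m x 50 m floor
heat = sinr_heatmap([(10.0, 25.0), (40.0, 25.0)])
print(heat.shape, f"min {heat.min():.1f} dB, max {heat.max():.1f} dB")
```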

As Figure 3 shows, these predictions stay very close to the actual ground truth. This enables proactive management: autonomous robots can adjust their routes to avoid dead zones before they occur. Even in a cluttered, changing environment, this predictive capability provides a solid foundation for ultra-reliable low-latency communication.
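Proactive routing on top of a predicted map can be as simple as a graph search that treats low-SINR cells as obstacles. The Python sketch below runs breadth-first search over a hypothetical predicted SINR grid and returns the shortest 4-connected route that never enters a cell below a chosen threshold; the grid, the 0 dB threshold, and the function name are assumptions for illustration.

```python
from collections import deque

import numpy as np


def safe_route(sinr_db, start, goal, min_sinr_db=0.0):
    """Shortest 4-connected path that avoids cells predicted below min_sinr_db.

    Returns a list of (row, col) cells from start to goal, or None if none exists.
    """
    ok = sinr_db >= min_sinr_db
    if not (ok[start] and ok[goal]):
        return None
    prev = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:  # walk the predecessor chain back to the start
            path = []
            while cell is not None:
                path.append(cell)
                cell = prev[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nxt[0] < ok.shape[0] and 0 <= nxt[1] < ok.shape[1]
            if inside and ok[nxt] and nxt not in prev:
                prev[nxt] = cell
                queue.append(nxt)
    return None


# Hypothetical forecast: good coverage except a predicted dead zone in column 2
predicted = np.full((5, 5), 10.0)
predicted[1:4, 2] = -5.0
route = safe_route(predicted, start=(2, 0), goal=(2, 4))
print(route)
```

Rerunning the search whenever a new forecast arrives lets the robot detour around a dead zone before it reaches it, rather than reacting after the link drops.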

Summary

We are seeing a fundamental shift in wireless engineering: a transition from universal, generic models to “neural surrogates” that capture the propagation physics of a particular room or street corner.

By combining diffusion models with physics-informed constraints, we can stop guessing how a channel might behave and start predicting it accurately. Whether it is cutting estimation errors at the baseband or keeping a warehouse robot connected as it zips between metal shelves, generative AI is making our networks smarter, more reliable, and far more site-specific.

References

Memory-Augmented Generative AI for Real-time Wireless Prediction in Dynamic Industrial Environments, arXiv

Generative Diffusion Models for Radio Wireless Channel Modelling and Sampling, arXiv

Generative and Explainable AI for High-Dimensional Channel Estimation, arXiv

Physics-Informed Generative Modeling of Wireless Channels, arXiv

EEWorld Online related content

Antennas to bits: Modeling real-world behavior in RF and wireless systems

How to test 5G: From millimeter-wave to massive MIMO to beamforming

How AI spurs efficient wireless systems design

5G and AI: How they complement each other

Engineers use AI/ML to improve test

Creating 5G massive MIMO: Part 1
